Fig. A. – The difference between a Feynman diagram for virtual radiation exchange and a Feynman diagram for real radiation transfer in spacetime. Our understanding of the distinction is based on the correct (non-Catt) physics of the Catt anomaly – we see electromagnetic gauge bosons propagating in normal logic signals in computers.

Electron drift current in the direction of the signal occurs in response to the light speed propagating electric field gradient, and is not the cause of it for various reasons: (1) the logic front is propagated via two conductors with electrons going in opposite directions in each, so if you claim that electricity is like a line of charges pushed from one end, you have the problem that in one conductor the electrons are going in the opposite direction to the propagating logic step, (2) the electron drift speed is way too small, and (3) electron drift current anyway carries way, way too little kinetic energy to be able to produce the electromagnetic fields that in turn cause electric currents, on account of the small drift velocities of conduction electrons and on account of the small masses of conduction electrons, and so this kinetic energy (½mv^2) is dwarfed by the energy carried by the electromagnetic field which causes the electron drift current. The electromagnetic field is composed of gauge bosons. Therefore, we can learn about quantum field theory by studying the Catt anomaly. What happens here is that in electricity wherever you get propagation you have charged, massless electromagnetic gauge bosons travelling in two different directions at the same time; the transfer in two directions is physically demanded in order to avert infinite self-inductance due to the motion of massless charges. This has been carefully investigated and leads to solid predictions. “Virtual” radiation like gauge bosons (vector bosons, exchange radiation) in Yang-Mills quantum field theories SU(2) and SU(3) travels between charges (in two directions, i.e. both from charge 1 to charge 2, and from charge 2 to charge 1 at the same time, so the magnetic fields of the exchange radiations going in opposite directions at the same time on the average have opposite directed curls and thus cancel out, preventing infinite self-inductance problems).
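To put rough numbers on points (2) and (3), here is a minimal Python sketch. The wire cross-section, current and signal voltage are illustrative assumptions of mine, not figures from this post, and the field energy per metre is estimated simply as the delivered power divided by the speed of light (a real cable carries the step somewhat slower than c).

```python
# Illustrative figures only: wire size, current and voltage are assumptions.
E_CHARGE = 1.602e-19   # electron charge, C
E_MASS = 9.109e-31     # electron mass, kg
C_LIGHT = 2.998e8      # speed of light, m/s
N_COPPER = 8.5e28      # conduction electrons per m^3 in copper (textbook value)


def drift_velocity(current_a, area_m2, n=N_COPPER):
    """Mean electron drift speed v = I / (n e A), in m/s."""
    return current_a / (n * E_CHARGE * area_m2)


def drift_kinetic_energy_per_metre(current_a, area_m2, n=N_COPPER):
    """Kinetic energy (1/2 m v^2 per electron) of the drifting electrons in 1 m of wire, in J."""
    v = drift_velocity(current_a, area_m2, n)
    electrons_per_metre = n * area_m2
    return electrons_per_metre * 0.5 * E_MASS * v ** 2


def field_energy_per_metre(volts, current_a, speed=C_LIGHT):
    """Energy carried per metre by a step delivering power V*I at the given speed, in J."""
    return volts * current_a / speed


if __name__ == "__main__":
    area = 1e-6  # assumed 1 mm^2 conductor
    print("drift speed (m/s):", drift_velocity(1.0, area))
    print("drift KE per metre (J):", drift_kinetic_energy_per_metre(1.0, area))
    print("field energy per metre (J):", field_energy_per_metre(5.0, 1.0))
```

With these assumed values the drift speed comes out well under a millimetre per second, and the drift kinetic energy per metre is many orders of magnitude below the energy per metre carried by the field.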

Fig. B. – Electron orbits in a real atom due to chaotic interactions, not smooth curvature. Exchange radiation leads to electromagnetism and gravitation: see the posts https://nige.wordpress.com/2007/06/20/the-mathematical-errors-in-the-standard-model-of-particle-physics/ and https://nige.wordpress.com/about/ for mechanism and some predictions. Other details can be found in other recent posts on this blog, and in the comments sections to those posts (where I’ve placed many of the updates and corrections, to avoid confusion and to preserve a sense of chronology to developments). The real motion of an electron or other particle is simply the sum of all quantum interaction momenta which operate on it, i.e., you need to add up the vectorial contributions of all the impulses the electron comes under from exchange radiations in the vacuum.
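As a toy illustration of "adding up the vectorial contributions of all the impulses", the sketch below sums a list of two-dimensional impulse vectors. The function names and the Gaussian model for the random impulses are assumptions made purely for illustration; they are not a model of any particular quantum field.

```python
import random


def net_impulse(impulses):
    """Vector sum of a sequence of (px, py) impulses."""
    px = sum(p[0] for p in impulses)
    py = sum(p[1] for p in impulses)
    return (px, py)


def random_impulses(count, mean=(0.0, 0.0), spread=1.0, seed=0):
    """Hypothetical impulses: Gaussian scatter around a mean push (an assumed model)."""
    rng = random.Random(seed)
    return [
        (rng.gauss(mean[0], spread), rng.gauss(mean[1], spread))
        for _ in range(count)
    ]


if __name__ == "__main__":
    # A handful of impulses gives an erratic result; many impulses
    # average out towards count * mean.
    for count in (5, 5000):
        total = net_impulse(random_impulses(count, mean=(0.1, 0.0)))
        print(count, total)
```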

Differential equations describing smooth curvatures and continuously variable fields in general relativity and mainstream quantum field theory are wrong except for very large numbers of interactions, where statistically they become good approximations to the chaotic particle interactions which are producing accelerations (spacetime curvatures, i.e. forces). See https://nige.wordpress.com/2007/07/04/metrics-and-gravitation/ and in particular see Fig. 1 of the post: https://nige.wordpress.com/2007/06/13/feynman-diagrams-in-loop-quantum-gravity-path-integrals-and-the-relationship-of-leptons-to-quarks/. Think about air pressure as an analogy. Air pressure can be represented mathematically as a continuous force acting per unit area: P = F/A. However, air pressure is not a continuous force; it is due to impulses delivered by discrete random, chaotic strikes by air molecules (travelling at average speeds of 500 m/s in sea level air) against surfaces. If therefore you take a very small area of surface, you will not find a continuous uniform pressure P acting on it. Instead, you will find a series of chaotic impulses due to individual air molecules striking the surface! This is an example of how a useful mathematical fiction on large scales, like air pressure, loses its accuracy if applied on small scales. It is well demonstrated by Brownian motion. The motion of an electron in an atom is subjected to the same thing simply because the small size doesn’t allow large numbers of interactions to be averaged out. Hence, on small scales, the smooth solutions predicted by mathematical models are flawed. Calculus assumes that spacetime is endlessly divisible, which is not true when calculus is used to represent a curvature (acceleration) due to a quantum field! Instead of perfectly smooth curvature as modelled by calculus, the path of a particle in a quantum field is affected by a series of discrete impulses from individual quantum interactions.
The summation of all these interactions gives you something that is approximated in calculus by the “path integral” of quantum field theory. The whole reason why you can’t predict deterministic paths of electrons in atoms, etc., using differential equations is that their applicability breaks down for individual quantum interaction phenomena. You should be summing impulses from individual quantum interactions to get a realistic “path integral” to predict quantum field phenomena. The total and utter breakdown of mechanistic research in modern physics has instead led to a lot of nonsense, based on sloppy thinking, lack of calculations, and the failure to make checkable, falsifiable predictions and obtain experimental confirmation of them.
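The air pressure analogy can be made concrete with a small Monte Carlo sketch in Python. It is a toy model resting on my own assumptions (each strike delivers an exponentially distributed impulse, and each sample contains a fixed number of strikes), so treat it as an illustration of the averaging argument rather than a simulation of real gas kinetics.

```python
import random
import statistics


def relative_fluctuation(strikes_per_sample, samples=2000, seed=1):
    """Standard deviation divided by mean of the total impulse per sample.

    Each sample is the sum of `strikes_per_sample` random impulses, a stand-in
    for the molecular strikes on a patch of surface during one time interval.
    """
    rng = random.Random(seed)
    totals = [
        sum(rng.expovariate(1.0) for _ in range(strikes_per_sample))
        for _ in range(samples)
    ]
    return statistics.pstdev(totals) / statistics.mean(totals)


if __name__ == "__main__":
    # Few strikes (tiny area): the "pressure" is visibly chaotic.
    # Many strikes (large area): the smooth P = F/A picture becomes a good approximation.
    for n in (10, 100, 1000):
        print(n, relative_fluctuation(n, samples=500))
```

In this toy model the relative scatter falls roughly as one over the square root of the number of strikes, which is why the continuous description only works when very many interactions are being averaged.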

Fig. C. – Normally we can ignore equilibrium processes like the radiation we are always emitting and receiving from the environment (because the numbers cancel each other out). Similarly, we can normally not see any net loss of energy from an electron which radiates as it orbits the nucleus, because it is receiving energy in equilibrium with 10^80 charges all radiating throughout the universe. Nutcases who can’t grasp that all electrons behave according to the same basic laws of nature (i.e., the nutcases like Bohr who believed religiously that only one electron would radiate, so it would be out of equilibrium and would spiral into the nucleus) tend to adopt crackpot interpretations of quantum mechanics like the Copenhagen Interpretation, which makes metaphysical claims that are not even wrong (i.e., claims that can’t be falsified even in principle). Don’t listen to those liars and charlatans if you want to learn physics, but you’d better build a shrine to them if you want to become a paid-up member of the modern physics mainstream orthodoxy of charlatans.

(End of 16 January 2008 update.)

This post doesn’t yet summarise the material on this blog. I’ve recently finished with Weinberg’s first two volumes of “The Quantum Theory of Fields”. I don’t find Weinberg’s style and content helpful in volume 1 apart from the very helpful chapters 1 (history of the subject), 11 (one loop radiative corrections, with a nice treatment of vacuum polarization), and 14 (bound states in external fields). In volume 2, I found chapters 18 (renormalization group methods, dealing with the way the bare charge is shielded in QED and augmented in QCD by polarized vacuum, with a logarithmic dependency on collision energy which is a simple function of the distance particles approach one another in scattering interactions), 19 and 21 (symmetry breaking), and 22 (anomalies) of use. Generally, however, in the other chapters (and certainly in volume 3) Weinberg doesn’t follow physical facts but instead launches off into abstract speculative mathematical games, so a better book more generally (tied more firmly at every step to physical facts and not fantasy) is Professor Lewis H. Ryder’s “Quantum Field Theory” 2nd ed., 1996, especially chapters 1-3 and 6-9. (Beware of editor Rufus Neal’s proof-checking; the running header at the top of the pages throughout chapter 3 contains the incorrect spelling ‘langrangian’. Neal once rejected a manuscript of mine, and I’m glad now, seeing that his editorial work is so imperfect!)

It’s obvious that the plan to use SU(2) with massless gauge bosons to unify electromagnetism (two charged massless gauge bosons) and gravity (one massless uncharged gauge boson) is going to require some mathematical work. The idea for this comes from experimental fact: the physical mechanisms proved at https://nige.wordpress.com/about/ and applied to other areas in the last dozen blog posts or so, are predictive, have made predictions subsequently confirmed, and are compatible with the empirically confirmed portions of both quantum field theory and general relativity. It predicts accurately within the accuracy of available data the coupling constants for gravity and electromagnetism from the mechanism with gauge bosons that look like massless versions of the 3 massive weak gauge bosons of SU(2) in the standard model.

It is not the case that we’re proposing, as a mere speculative theory, that SU(2) is a theory of weak interactions, quantum gravity and electromagnetism all at once. Instead, it’s simply the case that we naturally end up with SU(2) as the gauge symmetry for electromagnetism-gravity because that’s what the predictive, fact-based mechanism of gauge boson exchange shows to have the right gauge bosons. In other words, we start with facts and end up with SU(2) as a consequence. That’s quite a different approach to what someone in the mainstream would probably do in this area, i.e., starting off speculatively with SU(2) as a guess, and seeing where it leads. But let’s try looking at the whole problem from that angle for a moment.

If we were to guess that U(1)xSU(2) electroweak symmetry breaking were wrong and that the correct model is actually that we lose U(1) entirely and replace the Higgs sector with something better so electric charge is mediated by massless versions of the W+ and W- SU(2) gauge bosons while the graviton is the massless version of the Z, we’d start by doing something very different to anything I’ve done already.

We’d take the existing SU(2) field lagrangian, remove the Higgs field so that the gauge bosons are massless (actually in SU(2) the gauge bosons are naturally massless, so the complexity of the Higgs field has to be added to give mass to the naturally massless weak gauge bosons, to break electroweak symmetry, which by itself should be a very big clue to anyone with sense that maybe massless weak gauge bosons exist at low energies but are manifested as electromagnetism and gravitation), and see about solving that lagrangian to obtain gravity and electromagnetism.
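For orientation, the standard textbook form of a pure SU(2) Yang-Mills lagrangian with no Higgs sector is sketched below. This is written from general knowledge rather than copied from any particular book; the field symbol W and coupling g are my notational assumptions, and sign conventions for g differ between textbooks.

```latex
\mathcal{L}_{\mathrm{YM}} = -\tfrac{1}{4}\, F^{a}_{\mu\nu} F^{a\,\mu\nu},
\qquad
F^{a}_{\mu\nu} = \partial_\mu W^{a}_\nu - \partial_\nu W^{a}_\mu
               + g\,\epsilon^{abc}\, W^{b}_\mu W^{c}_\nu,
\qquad a, b, c = 1, 2, 3 .
```

A bare mass term of the form \tfrac{1}{2} m^2 W^{a}_\mu W^{a\,\mu} is not invariant under the SU(2) gauge transformations, which is the sense in which the three gauge bosons are "naturally massless" until something extra is added to the lagrangian.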

The basic mainstream SU(2) lagrangian (the Weinberg-Salam weak force model) seems to be summarised neatly in section 8.5 (pp. 298-306) of Ryder’s “Quantum Field Theory” 2nd ed. (Ryder’s discussion is far, far more lucid physics than the mathematical junk in Weinberg’s books, never mind that Weinberg was one of the people who developed the so-called ‘standard model’. Whenever you see hype with theories forced together with a half-baked, untested Higgs mechanism, being grandly called a ‘standard model’ and you see elite physicists being hero-worshipped for it, it smells of a religious consensus or orthodoxy which is stagnating theoretical particle physics with stringy mathematical speculations. Only the tested and confirmed parts of the standard model are fact; the mainstream version of the standard model’s electroweak symmetry breaking Higgs field hasn’t been observed and doesn’t even make precise falsifiable predictions.)

(End of 5 January update)

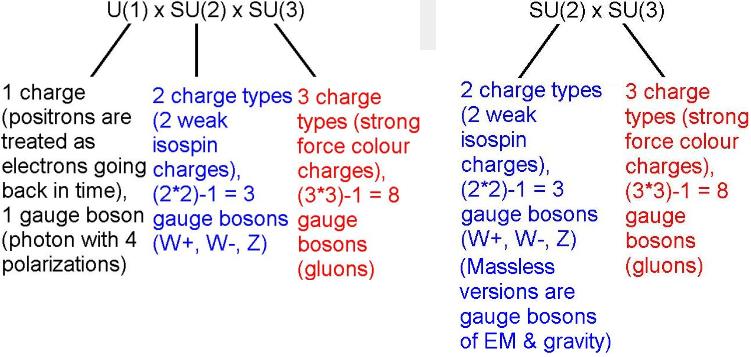

Summary. SU(2) x SU(3), a purely Yang-Mills (non-Abelian) symmetry group is the correct gauge group of the universe. This is based on experimental facts of electromagnetism which are currently swept under the carpet. The mainstream ‘standard model’ is more complex, U(1) x SU(2) x SU(3), and differs from the correct theory of the universe by its inclusion of the Abelian symmetry U(1) to describe electric charge/weak hypercharge, and omitting gravitation. The errors of U(1) x SU(2) x SU(3) are explained in the earlier post Correcting the U(1) error in the Standard Model of particle physics. Professor Baez has an article here, explaining the electroweak group of the standard model U(1) x SU(2).
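To make "non-Abelian" concrete: the generators of SU(2) can be represented by the Pauli matrices, which do not commute with one another, whereas the single generator of U(1) trivially commutes with itself. The short Python sketch below checks one commutator and counts generators using the standard N^2 - 1 rule for SU(N). The helper names are mine, chosen for illustration.

```python
# Pauli matrices as nested tuples of (complex) numbers.
SIGMA = [
    ((0, 1), (1, 0)),
    ((0, -1j), (1j, 0)),
    ((1, 0), (0, -1)),
]


def matmul(a, b):
    """Product of two 2x2 matrices."""
    return tuple(
        tuple(sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2))
        for i in range(2)
    )


def commutator(a, b):
    """[a, b] = ab - ba for 2x2 matrices."""
    ab, ba = matmul(a, b), matmul(b, a)
    return tuple(tuple(ab[i][j] - ba[i][j] for j in range(2)) for i in range(2))


def scale(c, m):
    """Multiply every entry of matrix m by the number c."""
    return tuple(tuple(c * x for x in row) for row in m)


def generator_count(n):
    """Number of generators, and hence gauge bosons, of SU(n)."""
    return n * n - 1


if __name__ == "__main__":
    # [sigma_1, sigma_2] = 2i sigma_3, so the group is non-Abelian.
    print(commutator(SIGMA[0], SIGMA[1]) == scale(2j, SIGMA[2]))
    print("SU(2) gauge bosons:", generator_count(2))
    print("SU(3) gauge bosons:", generator_count(3))
```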

Fig. 1 – The standard model U(1) x SU(2) x SU(3) seems to produce the right symmetries to describe nature as determined by experiments in particle physics (the existence of mesons containing quark-antiquark pairs is due to SU(2), while the existence of baryons containing triplets of quarks is a consequence of the three colour charges of SU(3); you can’t have more than 3 quarks because there are only 3 colour charges and you would violate the exclusion principle if you duplicated a set of quantum numbers by having more than one quark of a given colour charge present). Some problems with this system are that it includes one long-range force (electromagnetism) but not the other (gravity), and it requires a messy, unpredictive ‘Higgs mechanism’ to make the U(1) x SU(2) symmetry break in accordance to observations. Firstly, only particles with a left-handed spin have any SU(2) isospin charge at all, and secondly, the four gauge bosons of U(1) x SU(2) are only massless at very high energy: the ‘Higgs field’ supposedly gives the 3 gauge bosons of SU(2) masses at low energy, causing them to have short ranges in the vacuum and thus breaking the symmetry which exists at high energy. However, this Higgs theory doesn’t make particularly impressive scientific predictions as it comes in all sorts of versions (having different types of Higgs boson, with different masses). My argument is that instead of having a ‘Higgs field’ that gives mass to all SU(2) gauge bosons at low energy (as in the standard model), the correct theory of mass in nature is that only, say, left-handed versions of those gauge bosons gain masses from the ‘Higgs field’ (which means a different mass-giving field to the mainstream model), and the rest continue existing at low energy and have infinite range, giving rise to electromagnetism (due to two charged massless gauge bosons in the SU(2) symmetry) and gravity (due to the one uncharged massless gauge boson in the SU(2) symmetry). 
Hence, we lose U(1) from the standard model while gaining falsifiable predictions about gravity and electromagnetism, simply by replacing the ‘Higgs mechanism’ with something radical and much better.

The only difference between the correct theory of the universe, SU(2) x SU(3), and the existing mainstream ‘standard model’ U(1) x SU(2) x SU(3) is the replacement of U(1) with a new version of the Higgs field which makes SU(2) produce both 3 massive gauge bosons (the weak gauge bosons) and 3 massless versions of those gauge bosons. The latter triad all have infinite range because they are massless; one is neutral, which means that infinite range ‘neutral currents’ cause gravitation, and two are charged, which mediate electromagnetic fields from positive and negative charges (these massless charged gauge bosons propagate – unlike massless monodirectional charged radiation – because they are exchange radiation, so the magnetic fields of the charged massless gauge bosons propagating in opposite directions cancel one another). The SU(2) weak isospin charge description remains similar in the new model to the standard model, as does the SU(3) colour charge description.

The essential change is that massless versions of the charged W and neutral Z weak gauge bosons are the correct models for gravitation and electromagnetism. This replaces the existing Higgs field with a version which couples to some gauge bosons in a way which produces the chirality of the weak force (only left-handed fermions experience the weak force; all right-handed spinors have zero weak isotopic charge and thus don’t undergo weak interactions). The U(1) Abelian group is not the right group because it only describes one charge and one gauge boson (in the U(1) electromagnetism theory of the ‘standard model’, positive and negative charges have to be counted as the same thing by treating a positive charge as a negative charge going backwards in time, while the single type of gauge boson in U(1) has to account for both positive and negative electric fields around charges by having 2 additional – normally unseen – polarizations in addition to the 2 polarizations of the normal photon, which is an ad hoc complexity that is just as ‘not even wrong’ as the idea that positrons are electrons travelling backwards in time).

Detailed predictions. I’m going to add a little to this post each day, mainly improving and clarifying the content of previous blog posts such as this and this, which give detailed predictions.

Update: Kea has posted a picture taken at her University of Canterbury, New Zealand PhD ceremony, here. Her PhD was in ‘Topology in Quantum Gravity’ according to her university page. I hope it is published on arXiv or as a book with some introductory material at a low level, beginning with a quick overview of the technical jargon so that everyone can understand it. Mahndisa is also into abstract mathematics but has started a post discussing the perils of groupthink here. Groupthink is vital to allow communications to proceed smoothly between people: we all have to use the same definitions of words, and the same symbols in mathematics, to reduce the risks of confusion. But where the groupthink involves lots of people being brainwashed with speculations that are wrong, it prevents progress because any advance that involves correcting errors which are widely believed to not be errors (without strong evidence) is prevented.

Feynman, in his writings, gives several examples of this ‘groupthink’ problem. The length of the nose of the Emperor of China is one example. Millions of people are asked to guess the length of the nose of the Emperor of China, without any of them having actually measured it. Take the average and work out the standard deviation, and you might get a result like 2.20768 +/- 0.43282 inches. Next, assume that some bright spark actually meets the Emperor of China and measures the length of his nose, and finds it is, say, 3.4 +/- 0.1 inches (the standard deviation here is probable measurement error due to defining the exact spot where the nose starts on the face). Now, that person has got a serious problem being published in a peer-reviewed journal: his measurement is more than two standard deviations off the prevailing consensus of ignorant opinion. So prejudice due to the assumed priority of historically earlier guesswork or consensus-based guesswork ends up being used to censor out new scientific (measurement and/or observation based) facts!
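The "more than two standard deviations" figure can be checked with a couple of lines of Python, using the made-up numbers from the paragraph above (the function name is mine).

```python
def sigma_offset(measured, consensus_mean, consensus_sd):
    """How many consensus standard deviations the measurement lies from the consensus mean."""
    return abs(measured - consensus_mean) / consensus_sd


if __name__ == "__main__":
    # Guessed consensus 2.20768 +/- 0.43282 inches versus a measured 3.4 inches.
    print(sigma_offset(3.4, 2.20768, 0.43282))
```

The measured value sits between two and three consensus standard deviations from the guessed mean, even though the consensus spread says nothing about the accuracy of the guesses.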

Now take it to the next level. You choose a million nose experts, who have all had long experience of nose measurement, and you ask them to come up with a consensus for the length of the Emperor of China’s nose, without measuring it. Again, the consensus they arrive at is purely guesswork, and the average may be way off the real figure, so no matter how many experts you ask, it doesn’t help science one little bit: ‘Science is the organized skepticism in the reliability of expert opinion.’ – R. P. Feynman (quoted by Smolin, The Trouble with Physics, U.S. edition, 2006, p. 307).

Against this, many people out there believe that science is a kind of religion, all that matters is the mainstream belief, and facts are inferior to beliefs. They think that the consensus of expert opinion overrides factual evidence from experimental and observational data. They’re right in a political sense but not in a scientific one. Politics is about the prestigious, praiseworthy work involved in getting on the right side of an ignorant mob. Science is just about getting the facts straight.

Update 2: Dr Lubos Motl, the well-known blogger who is a fanatical string theorist and formerly an assistant professor of physics at Harvard, has a new blog post up claiming bitterly that:

‘As far as I know, every single high-energy physicist – graduate student, postdoc, professor – at every good enough place knows that the comments of people like Peter Woit or Lee Smolin about physics are completely worthless pieces of c***. Peter Woit is a sourball without a glimpse of creativity … a typical incompetent, power-thirsty, active moron of the kind who often destroy whole countries if they get a chance to do it.

‘Analogously, Lee Smolin is a prolific, full-fledged crackpot who has written dozens of papers and almost every single one is a meaningless sequence of absurdities and bad science. … everyone in the field knows that. But a vast majority of the people in the field think and say that these two people and their companions don’t matter; they don’t have any influence, and so forth.’

At least Dr Motl is honest about his personal delusions concerning his critics. Most string theorists just ‘stick their heads in the sand’, a course of action that not even an ostrich really takes, yet one which is strongly recommended by string theorist Professor Witten:

‘The critics feel passionately that they are right, and that their viewpoints have been unfairly neglected by the establishment. … They bring into the public arena technical claims that few can properly evaluate. … Responding to this kind of criticism can be very difficult. It is hard to answer unfair charges of élitism without sounding élitist to non-experts. A direct response may just add fuel to controversies.’

– Dr Edward Witten, M-theory originator, Nature, Vol 444, 16 November 2006.

This is convenient for Dr Witten, who earlier claimed (delusionally):

‘String theory has the remarkable property of predicting gravity.’

– Dr Edward Witten, M-theory originator, Physics Today, April 1996.

It’s very nice for such people to avoid controversy by ignoring critics. However, Dr Motl is deluded about the particle physics representation theory work of Dr Woit, and probably deluded about some of Dr Smolin’s better work, too.

But we should be grateful to Dr Motl for being open and allowing everyone to see that hype of uncheckable speculations can lead to insanity. Normally critics of mainstream hype campaigns are simply ignored (as recommended by the hype leaders), but in this case everybody can now clearly see the human paranoia and delusion which props up the mainstream 10/11 dimensional speculation and the resulting non-falsifiable landscape of solutions which it leads to.

In other cheerful news, Dr David Wiltshire of the University of Canterbury, New Zealand, has had a paper published in Physical Review Letters which attempts to provide a ‘radically conservative solution’ to the mainstream ad hoc theory that the universe is 76% dark energy. Dr Perlmutter’s automated observations of the redshifts of distant supernovae (halfway across the universe or more) in 1998 defied the standard prediction from general relativity that the big bang expansion should be slowed down due to gravity at large distances (large redshifts). The data Perlmutter obtained showed that the gravitational retardation was not occurring. Either

- gravity gets weaker than the inverse square over massive distances in this universe. This is because gravity is mediated by gravitons which get redshifted, and thus the quanta lose energy when exchanged between masses which are receding at relativistic velocities, i.e. well apart in this expanding universe (which would reduce the effective value of G over immense distances). Additionally, from empirical facts, the mechanism of gravity depends on surrounding recession of masses around any point. This means that if general relativity is just a classical approximation to quantum gravity (due to the graviton redshift effect just explained, which implies that spacetime is not curved over cosmological distances), we have to treat spacetime as finite and not bounded, so that what you see is what you get and the universe may be approximately analogous to a simple expanding fireball. Masses near the real ‘outer edge’ of such a fireball (remember that since gravity doesn’t act over cosmological distances, there is no curvature over such distances) get an asymmetry in the exchange of gravitons: exchanging them on one side only (the side facing the core of the fireball, where other masses are located). Hence such masses tend to just get pushed outward, instead of suffering the usual gravitational attraction, which is of course caused by shielding of all-round graviton pressure. In such an expanding fireball where gravitation is a reaction to surrounding expansion due to exchange of gravitons, you will get both expansion and gravitation as results of the same fundamental process: exchange of gravitons. The pressure of gravitons will cause attraction (due to mutual shadowing) between masses which are relatively nearby, but over cosmological distances the whole collection of masses will be expanding (masses receding from one another) due to the momentum imparted in the process of exchanging gravitons. I put this idea forward via the October 1996 Electronics World, two years before evidence confirmed the prediction that the universe is not decelerating.

or

- the ad hoc adjustment to the mainstream general relativity model was to add a small positive cosmological constant to cancel out the non-observed gravitational deceleration predicted by the original Friedmann-Robertson-Walker metric of general relativity. This small positive cosmological constant required that 76% of the universe is ‘dark energy’. Nobody has predicted why this should be so (the mainstream stringy and supersymmetry work predicted a negative cosmological constant, or a massive cosmological constant; not a small positive one).
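The redshift argument in the first alternative above can be illustrated numerically. The sketch below uses the standard special-relativistic Doppler formula, which is established for photons; applying it to exchanged gravitons, and reading the result as a reduction in the effective strength of gravity, is the hypothesis of this post and is assumed here rather than demonstrated. The function name is mine.

```python
import math


def received_energy_fraction(beta):
    """Fraction of a quantum's emitted energy received from a source receding at v = beta * c.

    Uses the relativistic Doppler factor 1 / (1 + z) = sqrt((1 - beta) / (1 + beta)).
    """
    if not 0.0 <= beta < 1.0:
        raise ValueError("beta must satisfy 0 <= beta < 1")
    return math.sqrt((1.0 - beta) / (1.0 + beta))


if __name__ == "__main__":
    # Nearby (slowly receding) masses exchange almost undegraded quanta;
    # rapidly receding masses exchange strongly redshifted ones.
    for beta in (0.0, 0.1, 0.6, 0.9, 0.99):
        print(beta, received_energy_fraction(beta))
```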

Dr Wiltshire’s suggestion is something else entirely: that the flaw in cosmological predictions derives from the false assumption of uniform density, where in fact galaxies are found concentrated in dense surface-type membranes on large void bubbles in the universe. Because time flows more slowly in the presence of matter, his theory is that this time dilation explains the apparent discrepancy in redshift results from distant supernovas. Assuming his calculations are correct, ‘This is a radically conservative solution to how the universe works.’

It’s good that Physical Review Letters published it since it is against the mainstream pro-‘dark energy’ orthodoxy. However, I think it is too conservative an answer, in that it doesn’t seem to make the kind of predictions or deliver the kind of understanding that helps quantum gravity. Kea quotes a DARPA mathematical challenge which says: ‘Submissions that merely promise incremental improvements over the existing state of the art will be deemed unresponsive.’ I think that sums up the sort of difficulty that crops up in science. Should difficulties routinely be overcome by adding modifications to existing theories (incremental improvements to the existing state of the art), or should the field be opened up to allow radical empirically based and checkable reformulations of theory to be developed?