Above: Feynman diagrams for the gravity field between two masses in general relativity, spin-2 quantum gravity, and spin-1 quantum gravity (which predicts cosmological acceleration, as discussed later on). For low energy interactions, at energies <0.5 MeV or so, below Schwinger’s threshold cutoff for virtual fermion pair-production in the vacuum, the quantum field theories are very simple and described approximately by inverse square force laws in electromagnetism and gravity. Nevertheless, in each case, virtual bosons (field quanta) mediate the interaction. Feynman in QED (1985) shows how to deal with electromagnetic path integrals at low energy (where the IR cutoff excludes any vacuum pair production or polarization, preventing virtual fermionic spacetime loops in the perturbative expansion to a path integral) by geometric summation of pictorial arrows representing amplitudes for different contributions: he proves that the path integral is not inherently mathematical. The amplitude for any given path of action S, i.e., e^(iS), is just summed as a series of arrows. Feynman argues in his 1965 book Character of Physical Law that the infinite series of terms in a perturbative expansion makes it crazy to suppose that nature is inherently mathematical. What does nature do when two quarks interact via a gluon exchange event? Do they calculate an infinite series of terms in a perturbative expansion? Does every nucleus contain an infinitely powerful computer? So it’s just absurd to pretend that existing developments in mathematical physics suggest that the universe is mathematical: it’s no more than an ill-informed prejudice. On the other hand, it is possible to make a simple Feynman graphic calculation for the quantum gravity path integral that does predict things falsifiably. 
This doesn’t disprove the usefulness of mathematical models in QFT for high energy physics, but it does show that sometimes the only way to make an omelette involves breaking an egg; i.e., you don’t always need to hide behind very complex calculations and to proclaim the unproved dogma that mechanisms don’t exist in the universe. Trying to get quantum gravity by examining only unobservable Planck scale phenomena has been unsuccessful. So why not go about building quantum gravity from low energy gravitational observations which are checkable and essentially non-mathematical (physically simple field quanta exchange without the perturbative corrections for pair production effects needed at high energies, above the IR cutoff)? The discovery of dark energy a.k.a. cosmological acceleration in 1998 has changed the framework of low-energy gravitational phenomenology so that the FRW metric of general relativity, developed from the explicit assumption of Einstein that the Newtonian gravity law is an accurate low energy asymptote, no longer need be taken as bible truth. If the graviton is spin-1, the exchange of gravitons with surrounding masses generates gravity, so there will be limits on the applicability of Newton’s law (based on the solar system and things in it like apples, not dark energy), and cosmological acceleration may be a quantum gravity effect rather than an unexplained lambda-CDM fit. Epicycle type modifications to a theory, such as ad hoc values of a cosmological constant, can model anything but that does not mean that epicycles are physically correct and will lead to progress.

“We don’t know that gravity is strictly an attractive force,” cautions Paul Wesson of the University of Waterloo in Ontario, Canada. He points to the “dark energy” that seems to be accelerating the expansion of the universe, and suggests it may indicate that gravity can work both ways. Some physicists speculate that dark energy could be a repulsive gravitational force that only acts over large scales. “There is precedent for such behaviour in a fundamental force,” Wesson says. “The strong nuclear force is attractive at some distances and repulsive at others.”

Hubble’s discovery of the receding universe in 1929 is that the recession velocity of galaxies away from us is

v = HR

where H is Hubble’s number and R is distance. This implies acceleration a = dv/dt. If there is “spacetime” in which light from stars obeys the equation R/t = c, then it follows that

a = dv/dt

= d(HR)/d(R/c)

= Hc

= 6 x 10^-10 m s^-2.
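
As a rough numerical check of a = Hc, here is a minimal Python sketch. The value H = 70 km/s/Mpc is an assumption (no value of H is stated above), as are the constant and function names; with that choice the product comes out a little under 7 x 10^-10 m s^-2, the same order as the figure quoted above.

```python
# ASSUMPTION: H = 70 km/s/Mpc; the text does not state which value of H it uses.
H_KM_S_MPC = 70.0
METRES_PER_MPC = 3.0857e22   # one megaparsec in metres
C = 2.998e8                  # speed of light, m/s


def hubble_acceleration(h_km_s_mpc: float) -> float:
    """Return a = H*c in m/s^2 for a Hubble parameter given in km/s/Mpc."""
    h_si = h_km_s_mpc * 1.0e3 / METRES_PER_MPC   # convert H to units of 1/s
    return h_si * C


a = hubble_acceleration(H_KM_S_MPC)
print(f"a = Hc = {a:.2e} m/s^2")
```

A smaller assumed H gives a proportionally smaller acceleration, so the result should be read as an order-of-magnitude figure.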

Alternatively, you can use the product rule of differentiation:

a = dv/dt

= d(HR)/dt

= (H dR/dt) + (R dH/dt)

Since Hubble’s law v = HR = Hct states that v is directly proportional to R or t, it follows that H is a constant with respect to R or ct in observable spacetime (where the distance we see is coupled to time past by the rigid relationship R = ct), so the second term’s gradient dH/dt = 0, although H is not a constant with respect to the expansion of the universe in real time, since the age of the flat universe is T = 1/H. This leaves us

a = H dR/dt

= Hv

where for the very great distances concerned the redshifts are large and recession velocities are relativistic, so v ~ c, giving a ~ Hc.

Another way to derive this, in which time runs forward (rather than backwards with increasing distance from us), is often more acceptable conceptually, and is linked here: https://nige.files.wordpress.com/2009/08/figure-14.jpg (the only difference in the result from the two different derivations is a minus sign, due to the difference in the relationship between time and distance in each derivation).

This is the cosmological acceleration of the receding matter in the universe, implying force F = ma outward and an inward reaction force which is identical according to Newton’s 3rd law, and can only be mediated by gravitons. This makes quantitative predictions (which will be shown later on, below). It’s extremely educational to see people’s reactions to this May 1996 prediction of the acceleration of the universe. String theorist “peer”-reviewers for Classical and Quantum Gravity rejected the prediction because it was part of a paper dealing with a non-string quantum gravity approach; the anonymous peer-review report stated that it did not address problems in string theory, as if that was a valid criticism of the prediction. Similar responses of disinterest were received from other journals. When it was published via the less-appropriate October 1996 issue of Electronics World, and the February 1997 issue of Science World (ISSN 1367-6172), another Electronics World author, Mike Renardson, kindly corresponded but claimed that the predicted acceleration of 6 x 10^-10 m s^-2 was too small to detect. However, the age of the universe is immense, and so over such very large (cosmological-sized) distances, this small outward acceleration was enough to offset the predicted gravitational deceleration of the universe! This is how Perlmutter and others discovered observationally the acceleration of the universe in 1998. Abuse and insults lacking any scientific substance whatsoever followed every attempt to point out that the acceleration had been accurately predicted. Because of widespread ignorance, I had to first explain spacetime, differentiation (people are happy to use it for well established applications without question, but then doubt calculus when used to make progress on a new problem!), and Hubble’s empirical law, then I had to explain the observations of the cosmological acceleration. 
Usually, one of these basic foundations would prove a stumbling block before the new work had even been explained. Scientific journalism is so poor that many physicists have no idea of the basics in a real, quantitative sense.

This was possible because all of the media obfuscated the discovery of the cosmological acceleration of the universe by failing to state what it was quantitatively; they were deliberately vague and omitted to state that the outward acceleration of the universe is less than 10^-10 of the acceleration due to gravity on the Earth’s surface. Why did they not report the number measured? As always in scientific obfuscation, it was probably considered too technical for readers. As a result, nobody – not even the average physicist – had a clear idea of what had actually been measured. Science is quantitative. Take away the facts, and you are left with arm-waving dogmatic-sounding drivel, which is counter-productive and contrary to real understanding. Political journalists wouldn’t expect to get away with reporting a national debt vaguely, without stating what the number really was, but “scientific” journalists are happy to be pseudo-scientific when it comes to physics! They think that omitting numbers makes it more “user friendly” to readers.

Another objection is the claim that the universe isn’t expanding and Hubble’s redshifts are due to light spontaneously changing frequency. There is no evidence for this; light has never been observed to do this, it slows down in media loaded with electromagnetic fields like a block of glass, but it doesn’t change frequency, and the redshifted galaxy spectra do not exhibit the frequency-dependent degradation effects that occur when dusts redden light. The best example is the cosmic microwave background radiation, which is the most highly redshifted radiation ever observed; it’s also the most perfect Planck radiation spectrum ever observed, proving that the redshift has linearly changed every frequency, unlike the reddening produced when light travels through dust clouds. It’s not possible to accurately explain the redshift data by any known mechanism other than the recession of distant matter. Professor Wright has disproved “tired light” ideas against the empirical Hubble law: http://www.astro.ucla.edu/~wright/tiredlit.htm. Newton’s 2nd and 3rd laws are empirically established. Spacetime is a fact: light from distant stars takes a long time to arrive, so the galaxy will move during that period of time, and the apparent distance in Hubble’s v = HR is therefore only meaningful for time past t = R/c, which is dependent on R. So it’s more sensible to formulate the Hubble law as an acceleration: a = dv/dt = d(HR)/d(R/c) = Hc. This quantitative fact-based prediction of the acceleration of the universe was published via page 893 of Electronics World in October 1996 and the February 1997 issue of Science World ISSN 1367-6172, and was empirically confirmed two years later by Perlmutter and others, see the diagram here (this diagram gives a more rigorous derivation by differentiating time measured from the time of the big bang, running forward, not just spacetime running backwards as we look to greater distances).

In May 1996 we had argued in a preprint submitted to more appropriate journals but rejected by string theorist “peer” reviewers who claimed falsely that it was “speculative”, that this acceleration will be measurable and must be examined with Newton’s 2nd and 3rd laws for its implications on LeSage quantum gravity. Newton’s 2nd law: F = ma where a is the acceleration above and m is the Hubble Space Telescope estimate of the mass of the luminous universe (9 x 10^21 solar masses or 3 x 10^52 kg, see p5 of the NASA report linked here) gives F = ma = 1.8 x 10^43 N. This is the radial outward force corresponding to the radial outward acceleration implied by the spacetime equivalent of Hubble’s empirical law. Newton’s 3rd law then shows that there must be an equal and opposite force, i.e. an inward force which, according to the possibilities suggested by the Standard Model and gravitation, is mediated by spin-1 gravitons in a LeSage mechanism. Give fundamental particles a graviton interaction cross-section equal to the cross-sectional area for their black-hole event horizon (for which we have other independent evidence, see the earlier post linked here), and you get a quantitative prediction of G. So this mechanism predicted things correctly, and the cosmological acceleration was predicted in 1996 and published via Electronics World October 1996 page 893 and Science World ISSN 1367-6172, February 1997, years ahead of its observational discovery by two independent groups of astronomers.
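
The F = ma arithmetic can be reproduced in a couple of lines. This is only a sketch using the numbers as quoted above (the 3 x 10^52 kg mass and the 6 x 10^-10 m s^-2 acceleration); the names are illustrative. Note that the kilogram figure is taken as given: converting 9 x 10^21 solar masses at about 1.99 x 10^30 kg each would give a somewhat smaller mass, and a proportionally smaller force.

```python
# Values as quoted in the text above; names are illustrative only.
MASS_KG = 3e52          # quoted mass of the luminous universe, kg
ACCELERATION = 6e-10    # quoted outward acceleration a = Hc, m/s^2


def outward_force(mass_kg: float, acceleration: float) -> float:
    """Newton's 2nd law, F = m*a, returned in newtons."""
    return mass_kg * acceleration


force = outward_force(MASS_KG, ACCELERATION)
print(f"F = ma = {force:.1e} N")
```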

Above: Perlmutter’s discovery of the acceleration of the universe, based on the redshifts of fixed energy supernovae, which are triggered as a critical mass effect when sufficient matter falls into a white dwarf. A type Ia supernova explosion, always yielding 4 x 10^28 megatons of TNT equivalent, results from the critical mass effect of the collapse of a white dwarf as soon as its mass exceeds 1.4 solar masses due to matter falling in from a companion star. The degenerate electron gas in the white dwarf is then no longer able to support the pressure from the weight of gas, which collapses, thereby releasing enough gravitational potential energy as heat and pressure to cause the fusion of carbon and oxygen into heavy elements, creating massive amounts of radioactive nuclides, particularly intensely radioactive nickel-56, but half of all other nuclides (including uranium and heavier) are also produced by the ‘R’ (rapid) process of successive neutron captures by fusion products in supernovae explosions. The brightness of the supernova flash tells us how far away the Type Ia supernova is, while the redshift of the flash tells us how fast it is receding from us. That’s how the cosmological acceleration of the universe was measured. Note that “tired light” fantasies about redshift are disproved by Professor Edward Wright on the page linked here.
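
To illustrate how a fixed-energy flash gives a distance, here is a minimal sketch of the standard distance-modulus relation, m - M = 5 log10(d / 10 pc). The peak absolute magnitude of -19.3 for a Type Ia supernova and the apparent magnitude of 24 are assumed, illustrative values that do not come from the text above, and the sketch ignores dust extinction and the redshift corrections a real analysis needs.

```python
# ASSUMPTION: typical peak absolute magnitude of a Type Ia supernova.
ABS_MAG_TYPE_IA = -19.3


def distance_pc(apparent_mag: float, absolute_mag: float) -> float:
    """Distance in parsecs from the distance modulus m - M = 5*log10(d / 10 pc)."""
    return 10.0 ** ((apparent_mag - absolute_mag + 5.0) / 5.0)


# Hypothetical observed peak apparent magnitude, for illustration only.
observed_mag = 24.0
d = distance_pc(observed_mag, ABS_MAG_TYPE_IA)
print(f"distance = {d / 1e6:.0f} Mpc")
```

The fainter the flash appears for the same intrinsic brightness, the larger the inferred distance; pairing that distance with the measured redshift gives one point on the plot.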

There’s no speculation in any of these fact based falsifiable predictions which have been observationally confirmed, in contrast to 10/11 dimensional string theory fantasies about unification at the Planck scale. General relativity is a classical theory which isn’t applicable to quantum gravitation for many reasons; the whole concept of representing gravitation using spacetime curvature, i.e. the continuous Ricci tensor rather than discrete accelerations, is contrary to quantum field theory and thus is just a classical approximation at best. The general relativity field equation then insists that the source of gravitation must be likewise a continuous tensor, the stress-energy tensor. In fact, it is obvious that this is wrong because all energy and matter (the source of gravitation) occurs in discrete particles, not a true continuous distribution. If you look at the general relativity textbooks, they have to postulate an imaginary “perfect fluid” to represent matter and energy in the stress-energy tensor, just so that they can solve the equation, giving a smooth (differential) spacetime curvature. This is vital to getting solutions. E.g., Bernard F. Schutz, A First Course in General Relativity (Cambridge University Press, 1986, pp. 89-90), which states: “In many interesting situations… the source of the gravitational field can be taken to be a perfect fluid…. A fluid is a continuum that ‘flows’… A perfect fluid is defined as one in which all antislipping forces are zero, and the only force between neighbouring fluid elements is pressure.”

The reason for such fanciful modelling is that the stress-energy tensor, T_ab, is a rank-2 tensor and you need to put the source of gravitation (energy, mass, momentum, stress) in a way that can be represented by second order differential equations. You can’t have “particles”, discrete quanta, or singularities as the source of the gravitational field or you won’t get a nice smooth equation for the curvature as the solution. So it’s just a classical theory of gravitation. It’s also clear that Maxwell’s equations are all rank-1 tensors, because that’s the way the electromagnetic field is defined. E.g., field lines diverge outwards from an electric charge by a first-order (rank-1) gradient equation. If Maxwell’s equations instead defined fields as curvatures or accelerations (not as Faraday field line divergences or curls) they would be rank-2 equations like general relativity, and the physical relationship would not be obfuscated by the differing definitions of “field” used in the mathematical models.

The whole spacetime curvature modelling procedure for accelerations is such an artificial, classical falsely non-quantized model, it’s hard for me to know how anyone can dupe themselves into believing in general relativity as the correct mathematical framework for quantum gravity. I know it gives the right answers that differ from the supposed predictions of Newtonian gravitation (which is actually a bit vague on the issue of the deflection of light by gravity). But the departure of general relativity from Newtonian gravity, apart from the false continuous curvature tensor formulation, is just due to making gravitation consistent with the conservation of mass-energy for the gravitational field itself when dealing with velocities near those of light.

A bullet fired past a mass with a speed small compared to that of light will be speeded up as well as slightly deflected towards the mass, due to gravity. When you work it out, half of the energy gained by the non-relativistic bullet as it passes a mass (from a great distance of approach) is used to speed up the bullet, and the other half of the energy is used to deflect the direction of the bullet slightly towards the mass. The photon behaves as a mass, m = E/c^2. But a photon is unable to speed up. So instead of only 50% of the energy it gains from its approach to the mass being used to speed up the photon and the other 50% being used to deflect its direction, 100% of the energy (twice as much as the supposed Newtonian prediction) is used to deflect the photon. Photons can’t speed up; they only deflect.
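
The factor of two can be put into numbers for light grazing the Sun. This is a minimal sketch, assuming the standard small-angle formulas 2GM/(bc^2) for the Newtonian-style estimate and 4GM/(bc^2) for general relativity, with the solar radius taken as the impact parameter b. The constants and the function name are illustrative choices that do not appear in the text above.

```python
import math

G = 6.674e-11        # gravitational constant, m^3 kg^-1 s^-2
C = 2.998e8          # speed of light, m/s
M_SUN = 1.989e30     # solar mass, kg
R_SUN = 6.957e8      # solar radius, m (used as the impact parameter)
RAD_TO_ARCSEC = 180.0 * 3600.0 / math.pi


def deflection_arcsec(factor: float, mass: float, impact_parameter: float) -> float:
    """Small-angle deflection factor*G*M/(b*c^2), converted to arcseconds."""
    return factor * G * mass / (impact_parameter * C**2) * RAD_TO_ARCSEC


newtonian = deflection_arcsec(2.0, M_SUN, R_SUN)      # half the energy deflects
relativistic = deflection_arcsec(4.0, M_SUN, R_SUN)   # all of the energy deflects

print(f"Newtonian-style estimate: {newtonian:.2f} arcsec")
print(f"General relativity:       {relativistic:.2f} arcsec")
```

The second figure is the familiar value of about 1.75 arcseconds for starlight grazing the Sun.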

So there is a physical, non-mathematical explanation of a supposedly special aspect of general relativity. Feynman in his Lectures on Physics gives a nice similar discussion for “curvature” in terms of the contraction of radius produced by the gravitational field. It’s like the Lorentz contraction of a moving object; gravitation contracts the dimensions of a mass by the amount (1/3)MG/c^2 which is 1.5 millimetres for the Planet Earth, but more for bigger masses. Circumference is unaffected since it is perpendicular to radius, so you get a distortion of Euclidean geometry which can be “explained” by a fourth dimension. However, the best explanation is a radial contraction caused by the pressure of exchanged gravitons acting on the fundamental particles in a mass, like a fluid pressure causing an object to contract. The gravitational potential energy gained by a mass falling from an infinite distance to the Earth’s surface is equivalent to the kinetic energy needed to reach Earth’s escape velocity. So, by Einstein’s equivalence principle between inertial and gravitational mass, this energy increases the mass and causes a contraction and time-dilation, by analogy to the Lorentz transformation of special relativity with the escape velocity placed into it as v = (2GM/x)^(1/2). Because for a mass the gravitational field extends in three spatial dimensions, the contraction of distance is spread over three degrees of freedom, so the time-dilation expanded by the binomial theorem to the first couple of terms is [1 - 2GM/(xc^2)]^(1/2) ~ 1 - GM/(xc^2), while the radial distance contraction for a spherical mass is (1/3)MG/c^2 = 1.5 mm for Earth’s radius. (Full derivation and discussion is linked here.)

The velocity needed to escape from the gravitational field of a mass (ignoring atmospheric drag), beginning at distance x from the centre of mass, by Newton’s law will be v = (2GM/x)^(1/2), so v^2 = 2GM/x. The situation is symmetrical; ignoring atmospheric drag, the speed that a ball falls back and hits you is equal to the speed with which you threw it upwards (the conservation of energy). Therefore, the energy of mass in a gravitational field at radius x from the centre of mass is equivalent to the energy of an object falling there from an infinite distance, which by symmetry is equal to the energy of a mass travelling with escape velocity v. By Einstein’s principle of equivalence between inertial and gravitational mass, this gravitational acceleration field produces an identical effect to ordinary motion. Therefore, we can place the square of escape velocity (v^2 = 2GM/x) into the Fitzgerald-Lorentz contraction, giving g = (1 - v^2/c^2)^(1/2) = [1 - 2GM/(xc^2)]^(1/2).

However, there is an important difference between this gravitational transformation and the usual Fitzgerald-Lorentz transformation, since length is only contracted in one dimension with velocity, whereas length is contracted equally in 3 dimensions (in other words, radially outward in 3 dimensions, not sideways between radial lines!), with spherically symmetric gravity. Using the binomial expansion to the first two terms of each: Fitzgerald-Lorentz contraction effect: g = x/x_0 = t/t_0 = m_0/m = (1 - v^2/c^2)^(1/2) = 1 - ½v^2/c^2 + … . Gravitational contraction effect: g = x/x_0 = t/t_0 = m_0/m = [1 - 2GM/(xc^2)]^(1/2) = 1 - GM/(xc^2) + …, where for spherical symmetry (x = y = z = r), we have the contraction spread over three perpendicular dimensions not just one as is the case for the FitzGerald-Lorentz contraction: x/x_0 + y/y_0 + z/z_0 = 3r/r_0. Hence the radial contraction of space around a mass is r/r_0 = 1 - GM/(xc^2) = 1 - GM/(3rc^2). Therefore, clocks slow down not only when moving at high velocity, but also in gravitational fields, and distance contracts in all directions toward the centre of a static mass. The variation in mass with location within a gravitational field shown in the equation above is due to variations in gravitational potential energy. The contraction of space is by (1/3)GM/c^2. This physically relates the Schwarzschild solution of general relativity to the special relativity line element of spacetime.
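
The numbers quoted for the Earth can be checked directly. This is a minimal sketch that evaluates the escape velocity, the contraction factor with its first-order binomial approximation, and the (1/3)GM/c^2 radial contraction; the values used for G, the Earth's mass and radius, and c are standard figures assumed here rather than taken from the text.

```python
import math

G = 6.674e-11         # gravitational constant, m^3 kg^-1 s^-2
C = 2.998e8           # speed of light, m/s
M_EARTH = 5.972e24    # mass of the Earth, kg
R_EARTH = 6.371e6     # mean radius of the Earth, m

# Escape velocity from the surface, v = (2GM/x)^(1/2).
escape_velocity = math.sqrt(2.0 * G * M_EARTH / R_EARTH)

# Contraction factor with v^2 = 2GM/x, and its first-order binomial expansion.
factor_exact = math.sqrt(1.0 - escape_velocity**2 / C**2)
factor_approx = 1.0 - G * M_EARTH / (R_EARTH * C**2)

# Radial contraction (1/3)GM/c^2, in metres.
contraction_m = G * M_EARTH / (3.0 * C**2)

print(f"escape velocity    = {escape_velocity / 1e3:.1f} km/s")
print(f"1 - exact factor   = {1.0 - factor_exact:.3e}")
print(f"1 - approx factor  = {1.0 - factor_approx:.3e}")
print(f"radial contraction = {contraction_m * 1e3:.2f} mm")
```

For the Earth the exact and first-order factors agree to within floating-point precision, and the contraction comes out at about 1.5 mm.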

Richard P. Feynman, November 1964 Messenger Lectures, The Character of Physical Law (also published in book form), http://quantumfieldtheory.org/:

‘What does the planet do? Does it look at the sun, see how far away it is, and decide to calculate on its internal adding machine the inverse of the square of the distance, which tells it how much to move? This is certainly no explanation of the machinery of gravitation!

‘It always bothers me that, according to the laws as we understand them today, it takes a computing machine an infinite number of logical operations to figure out what goes on in no matter how tiny a region of space, and no matter how tiny a region of time. [There are an infinite series of terms in the perturbative expansion to a path integral, which can’t all be evaluated; each term corresponds to one Feynman diagram. For low-energy physics, all of the important phenomena correspond to merely the first Feynman diagram, a simple tree branch shape with no spacetime loops which become important at high energy, above the IR or low-energy cutoff which corresponds to Schwinger’s threshold field strength for pair production and annihilation operators to begin to have an effect. Clearly in the real world, an infinite number of Feynman diagrams are not all contributing in the smallest space; this fact is demonstrated by the need for a high-energy UV cutoff to suppress pair production with infinite momenta at the smallest distances, renormalizing the charge by limiting the quantum field effect from the pairs of virtual fermionic charges. However, the UV cutoff used in renormalization, while eliminating the infinite momenta from extremely high energy field phenomena, does not prevent the perturbative expansion having an infinite series of terms. Renormalization still leaves you with an infinite series of different loop-filled Feynman diagram terms in the expansion for energies below the UV cutoff energy.] How can all that be going on in that tiny space? Why should it take an infinite amount of logic to figure out what one tiny piece of spacetime is going to do? So I have often made the hypothesis that ultimately physics will not require a mathematical statement, that in the end the machinery will be revealed, and the laws will turn out to be simple, like the chequer board with all its apparent complexities.’

Frank Wilczek states that his fellow Nobel Laureate Feynman became a closet ether believer when he couldn’t get rid of vacuum interactions:

“As for Feynman … He told me he lost confidence in … emptying space when he found that both his mathematics and experimental facts required the kind of vacuum polarization modification of electromagnetic processes depicted – as he found it, using Feynman graphs … the influence of one particle on another is conveyed by the photon … the electromagnetic field gets modified by its interaction with a spontaneous fluctuation in the electron field – or, in other words, by its interaction with a virtual electron-positron pair. In describing this process, it becomes very difficult to avoid reference to space-filling fields. The virtual pair is a consequence of spontaneous activity in the electron field.”

– Frank Wilczek, “The Lightness of Being: Mass, Ether, and the Unification of Forces”, Basic Books, N.Y., 2008, p. 89.

“Many condensed matter systems are such that their collective excitations at low energies can be described by fields satisfying equations of motion formally indistinguishable from those of relativistic field theory. … a real Lorentz-FitzGerald contraction takes place, so that internal observers are unable to find out anything about their ‘absolute’ state of motion. … an effective but perfectly defined relativistic world can emerge in a fishbowl world situated inside a Newtonian (laboratory) system. …

“… Remarkably, all of relativity (at least, all of special relativity) could be taught as an effective theory by using only Newtonian language. … The ether theory had not been disproved, it merely became superfluous. Einstein realised that the knowledge of the elementary interactions of matter was not advanced enough to make any claim about the relation between the constitution of matter (the ‘molecular forces’), and a deeper layer of description (the ‘ether’) with certainty.”

Feynman makes the complaint that the perturbative expansion for any QFT calculation has an infinite number of terms, corresponding to an infinite number of Feynman diagrams. So if nature is really mathematical in accordance with the laws known today, each quark is capable of calculating infinite numbers of terms in QFT calculations to determine its cross-section for a particular interaction with gluons. There are many people out there who write about parallel universes from non-relativistic, physically false “first-quantization” (pre-QFT) quantum mechanics errors, but there is no scientific evidence and such theories are extraordinarily expensive in terms of making extravagant assumptions for nothing falsifiably predictive in return. There is no wavefunction collapse because in QFT (unlike 1st quantization Heisenberg/Schroedinger QM) the uncertainty principle isn’t directly modelling the position-momentum uncertainty of a real particle moving in a classical Coulomb field; instead, the uncertainty principle works indirectly, making the field quanta randomly interact with the real electron, thereby imparting uncertainty by a simple physical mechanism akin to the effect of Brownian motion of <5 micron diameter dust particles due to individual air molecule impacts at random. Field quanta are created within 33 fm of real unit charges by pair-production and annihilation where the field strength is above Schwinger’s 1.3 x 10^18 V/m IR cutoff for virtual fermion pair production. The wavefunction of a real particle leads to a false model of the motion of the particle, in which a measurement “collapses” the indeterminacy to a definite result. The indeterminacy is not inherently in the real particle; it’s in the randomness of the interactions with the non-classical quantum field around it. 
Similarly, the chaotic motion of a <5 micron dust particle is not proof that you need to model it by a Schroedinger equation with a wavefunction that collapses when measured; that model would only be needed if you falsely ignored the air molecule bombardments at random and falsely treated the air as being a classical continuous, non-random, pressure at all scales. This is precisely Schroedinger’s error; he falsely kept the Coulomb field that binds the electron to the nucleus classical and ignored the field quanta randomness, and was led to a model in which the chaotic motion was somehow intrinsically uncertain without a mechanism (the Heisenberg uncertainty principle). Actually the uncertainty principle is a useful model for the randomness of light-velocity 2nd quantization field quanta impact effects like fundamental forces:

F = dp/dt

where c = dr/dt for light velocity quanta, so that dt = dr/c. Hence

F = c*dp/dr

Now we introduce the “uncertainty principle” for the light velocity field quanta, in the form p*r = h-bar (from Heisenberg, applied to the offshell field quanta rather than to onshell or “real” particles), which implies p = h-bar/r. Hence

F = c*d(h-bar/r)/dr = -c*h-bar/r^2

The production of the inverse-square force law in this simple 2nd quantization calculation indicates the validity of this model to the real world. The actual force of this simple calculation is a factor of the Sommerfeld constant, 137.036…, larger than that given by Coulomb’s law for two electrons or two protons (unit charges). The reason is that this is the fundamental force strength without the shielding due to vacuum polarization within 33 fm of the particle (above the IR cutoff on the running coupling). Penrose suggests (Road to Reality) that the full vacuum polarization shielding factor (the vacuum is only polarized between UV to IR cutoffs, i.e. from the grain size up to 33 fm) is only the square root of the 137 number because the equation for that number contains the square of the electronic charge, and this suggests to Penrose that 137 is proportional to the square of the charge, thus the charge is proportional to the square root of 137.
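
The factor of 137 is easy to verify numerically. This is a minimal sketch comparing the magnitude h-bar*c/r^2 with the Coulomb force e^2/(4*pi*epsilon_0*r^2) between two unit charges; the constants are standard SI values, and the function names and the test separation are illustrative assumptions. Because both forces fall off as 1/r^2, the ratio does not depend on r.

```python
import math

HBAR = 1.054571817e-34       # reduced Planck constant, J s
C = 2.99792458e8             # speed of light, m/s
E_CHARGE = 1.602176634e-19   # elementary charge, C
EPS0 = 8.8541878128e-12      # vacuum permittivity, F/m


def field_quanta_force(r: float) -> float:
    """Magnitude of F = h-bar*c/r^2 from the calculation above, in newtons."""
    return HBAR * C / r**2


def coulomb_force(r: float) -> float:
    """Coulomb force between two unit charges at separation r, in newtons."""
    return E_CHARGE**2 / (4.0 * math.pi * EPS0 * r**2)


r = 1.0e-10   # arbitrary separation in metres; the ratio is independent of it
ratio = field_quanta_force(r) / coulomb_force(r)
charge_factor = math.sqrt(ratio)

print(f"force ratio            = {ratio:.3f}")
print(f"square root (per charge) = {charge_factor:.2f}")
```

The ratio is the reciprocal of the fine-structure constant, and its square root is the 11.7 figure for the charge.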

As the formula above shows, the fundamental QFT force strength is 137 times higher than the Coulomb law, indicating that the bare-core electronic charge (if the vacuum polarization veil could be removed completely) for the product of two unit charges in Coulomb’s law is 137 = (shielding factor)^2, so that as Penrose suggests, the bare core charge is 11.7 times the value of the charge we observe in low-energy physics (below the IR cutoff, or beyond 33 fm from a unit charge).
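
The 33 fm figure can be cross-checked against Schwinger's threshold. This is a sketch under two stated assumptions: that the threshold is the standard critical field m^2 c^3/(e*h-bar) for electron-positron pairs, and that the cutoff radius is the distance at which the Coulomb field of a single unit charge falls to that value. The names are illustrative.

```python
import math

HBAR = 1.054571817e-34        # reduced Planck constant, J s
C = 2.99792458e8              # speed of light, m/s
E_CHARGE = 1.602176634e-19    # elementary charge, C
EPS0 = 8.8541878128e-12       # vacuum permittivity, F/m
M_ELECTRON = 9.1093837e-31    # electron mass, kg

# ASSUMPTION: Schwinger's threshold is the critical field m^2 c^3 / (e h-bar).
schwinger_field = M_ELECTRON**2 * C**3 / (E_CHARGE * HBAR)   # V/m

# ASSUMPTION: the cutoff radius is where the Coulomb field of one unit charge,
# e / (4 pi eps0 r^2), equals the Schwinger field.
cutoff_radius = math.sqrt(E_CHARGE / (4.0 * math.pi * EPS0 * schwinger_field))

print(f"Schwinger field = {schwinger_field:.2e} V/m")
print(f"cutoff radius   = {cutoff_radius * 1e15:.1f} fm")
```

Under those assumptions the field comes out near 1.3 x 10^18 V/m and the radius near 33 fm, in line with the figures used in this post.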

This 2nd quantization Dirac/Feynman necessity (discrediting non-relativistic 1st quantization, i.e. Heisenberg/Schroedinger QM) has been known since the development of gauge theory in the 1940s. Proponents of ignorance just “explain away” problems by arm waving anthropic principles, without doing real science, the first basis of which is to steer clear of prejudices like the anthropic principle, and to stick to falsifiable predictions.

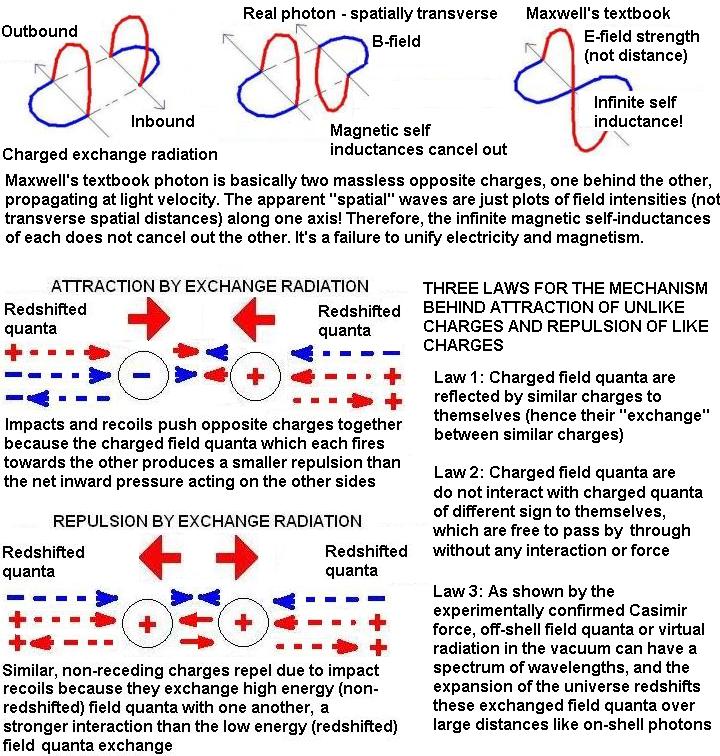

Catt’s model of electric charge as the superposition of reciprocating TEM waves or Poynting vectors describes the exchange of electromagnetic energy. Catt’s experimentally based model of a “contrapuntal” charged capacitor is as follows, from the page http://www.ivorcatt.com/1_3.htm:

Thus, there is an equilibrium of “field quanta” flowing in opposite directions to sustain the electric fields of a “steady electric charge”, with as much energy going one way as another at light velocity, so that the magnetic field vectors of the Poynting vectors cancel out as shown above, while the electric fields automatically add and don’t cancel. Catt states http://www.ivorcatt.com/1_3.htm :

“Let us summarize the argument which erases the traditional model;

a) Energy current can only enter a capacitor at the speed of light.

b) Once inside, there is no mechanism for the energy current to slow down below the speed of light.

c) The steady electrostatically charged capacitor is indistinguishable from the reciprocating, dynamic model.

d) The dynamic model is necessary to explain the new feature to be explained, the charging and discharging of a capacitor, and serves all the purposes previously served by the steady, static model.”

Catt has made the error of not distinguishing between macroscopic and microscopic phenomena. He therefore tries to use the experiments justifying the arguments above (for the vital experiment see this page of his article from the December 1980 issue of Wireless World, http://www.ivorcatt.co.uk/97rdeat2.jpg ) to “replace” electric charge with contrapuntal flows of light speed charged electromagnetic radiation, instead of seeing that his experiments merely tell us about the electric field features which are observed conventionally and described as static “charge”, such as that of a charged electron. Electric fields are composed of charged radiations described by the Poynting vector.

If you move relative to such a charge, the motions of the two flows of energy no longer cancel out the magnetic field perfectly, so some of the magnetic field component (the spin of the field quanta which produce the torque of the field) becomes apparent. Previously invisible magnetic field energy thus becomes visible.

An electron is a fermion with a spin action of 1/2 in quantum action units of h/(twice Pi), or h-bar. A photon is a boson with a spin of 1 in the same units. A neutral photon or W0 boson contains equal amounts of positive and negative electric field (or effective electric “charges” which cancel out), and would therefore seem composed in some sense of two fermion-like (charged) components each of spin 1/2, which add up to 1, rather than cancelling out, as you might expect.

If we just look at magnets, with no apparent electric fields present, you get a situation where there are equal amounts of positive and negative electrically charged but massless field quanta being exchanged, so the electric fields cancel out, but magnetic fields don’t cancel.

A charged photon or a W+ or W– boson in SU(2) weak isospin theory only exists as exchange radiation, travelling both ways between charges (from one charge to another and vice versa, simultaneously), so that the superimposed magnetic fields cancel out like Catt’s trapped Poynting energy flows in a charged capacitor.

See Catt’s page http://www.ivorcatt.com/6_2.htm for the infinite magnetic self-inductance problem with the one-way flow of charge: “The self inductance of a long straight conductor is infinite. This is a recurrence of Kirchhoff’s First Law, that electric current cannot be sent from A to B. It can only be sent from A to B and back to A.”

This reduces the Yang-Mills equations of an SU(2) gauge theory to the Abelian (Maxwellian) gauge theory of electromagnetism, conventionally represented by U(1). In other words, U(1) is an approximation to SU(2) which holds when the mass of the 3 field quanta of SU(2) is zero. This is because the additional term in the Yang-Mills equations, making them differ from Maxwell’s equations, is a term for charge transfer by the field quanta exchange process, which isn’t observed in electromagnetism; so there is a physical reason why electromagnetism is described by an SU(2) Yang-Mills theory but in practice the Yang-Mills equations cancel down to the Abelian U(1) type Maxwell equations. By ignoring the subtle physical mechanism of infinite self-inductance and magnetic field cancellations for equilibrium exchange processes which are at work here, the conventional approach of looking solely at the mathematics will mislead you into seeing a distinction between Abelian and Yang-Mills gauge theories that is not really fundamental. The whole difference is the ability of field quanta to convey a net flow of charge; in electromagnetism this is prevented not by the fundamental mathematical gauge group being U(1) rather than SU(2), but by the infinite self-inductance of charged field quanta, which permits them to be exchanged at equilibrium rates only, producing a result which makes the real SU(2) nature of electromagnetism look deceptively like a U(1) Abelian theory.

From Catt, we know the reason why Yang-Mills SU(2) charge transfer effects are not observed in electromagnetism although they are observed in weak interactions mediated by massive field quanta: the reason is that the infinite magnetic self-inductance for light velocity massless charged field quanta prevents any net (one-way) flow of charged field quanta (TEM waves or Poynting vector energy). Such energy in electromagnetic fields can only flow both ways simultaneously, in equilibrium, to cancel out the magnetic field vectors.

So the U(1) electromagnetic component of the Standard Model of particle physics is just an approximation for massless field quanta to a true underlying SU(2) electromagnetic theory. This completely changes the unification between electromagnetic interactions and SU(2) weak isospin interactions in quantum field theory, because we then have electromagnetism really represented by SU(2), so there is a deep similarity between electromagnetism and the left-handed SU(2) weak interaction. The only difference is that SU(2) of electromagnetism involves massless versions of the 3 field quanta involved in the SU(2) weak isospin theory. Mass is given to the field quanta of left-handed SU(2) weak isospin, but not to the electromagnetic SU(2) field quanta. This accounts for the differences in the fields, such as the fact that the weak isospin field with its massive field quanta can deliver net charge but the massless charged quanta of electromagnetism can’t, why the weak isospin field is left-handed, and why the weak field is relatively weak (the 80 and 91 GeV massiveness of the weak field quanta slow them down compared to light velocity electromagnetic field quanta, as well as limiting their range unlike the unlimited range of electromagnetic field quanta, so they deliver impulses to charges less frequently than light velocity quanta, and thus the forces resulting are much weaker).

Maxwell’s equations shouldn’t be formulated as rank-1 tensors in terms of the Faraday concept of field lines, which are unobservables. I think Maxwell’s equations need to be replaced with rank-2 tensors dealing with the electromagnetic field in terms of accelerative spacetime curvature, like gravitation in general relativity. Then it will be possible to compare the general relativity and electromagnetic equations more usefully. Most of the mathematical confusion and lack of progress in unifying electromagnetism and gravitation stems from the differing definition of field in each case, leading to rank-1 (simple gradients and curls) equations for electromagnetism but rank-2 (accelerations and curvatures) equations for gravitation. There is no electric charge of the electron or any other particle observed; you just observe mass, spin, and electric fields. Nobody has ever seen “electric charge”, just static electric fields. Therefore in pair-production from photons with energy exceeding the rest mass of a fermion-antifermion pair, it is not necessary for electric charge to be created: the photon that disappears already has an electric field oscillating from positive to negative in sign, so the sum is zero. So when a photon undergoes pair production, there is not necessarily the creation of “charge”. The electric field energy from the photon simply separates into positive and negative components which become bound to two rest masses. This affects the Dirac ‘sea’ which is now just a sea of virtual masses (gravitational charges with only one sign, since all observed gravitational charges fall in the same direction), not virtual electric charges. So the pair production event involves the electric field of a photon being transferred to particles of mass; this gives those particles of mass an electric charge and weak isospin charge if they are left-handed in spin (for weak nuclear force interactions).

There is a close connection between gravity and weak isospin, since in this model all masses are derived from the 91 GeV Z0 massive weak gauge bosons coupling to particles with weak hypercharge. The different ways the different numbers of Z0 particles created in the vacuum can couple to fermions is what creates the spectrum of different masses we observe for leptons and quarks, as predicted in previous posts. The Z0 is the product of Weinberg mixing of the U(1) hypercharge/gravitational boson with the neutral SU(2) boson, W0. By contrast, in the existing non-gravity Standard Model, U(1) hypercharge when mixed with SU(2) gives the electromagnetic photon, but in our new model the mixing gives the graviton, and U(1)’s charge is not electric charge but mass (gravitational charge).

In the Standard Model, SU(2) when mixed with U(1) gives the two weak isospin charges and the three massive weak bosons (one electrically neutral Z0 and two electrically charged W’s), but in our model this only happens to the left-handed fraction due to mixing with the gravitational U(1) charge (mass), and the right-handed fraction of the SU(2) field bosons remain massless and therefore you have electrically charged massless field quanta which produce the electromagnetic field.

Electric “charges” are produced by the positively charged field quanta around a positron and negatively charged field quanta around an electron. In the Standard Model, U(1) cannot model electromagnetic fields with simply a virtual (gauge boson) 2-polarization photon, but requires a special photon with 4 polarizations. In our model, differing slightly from the Standard Model QED theory, the 2 extra “polarizations” are positive and negative field sign. Remember, the difference between the positron and electron is just the sign of the field, because so far nobody has seen electric “charge” (currently presumed by the mainstream to be a Planck length 6 dimensional string); they have only seen effects from the electric fields bound to rest masses. The concept of “electric charge” is fine when you remember that you are describing fields which are measurable, but is pseudoscience if you take it for granted (Phlogiston and Caloric are prior examples of models which became mistaken for realities before all the evidence was in; simply printing hype in a textbook and teaching it doesn’t prove it is science).

Path integral simplicity for the exchange of field quanta in low energy quantum gravity applications

Feynman’s book QED explains how to do the path integral approximately without using formal calculus. He gives the rules simply so you can draw arrows with similar length but varying directions, on paper, to represent the complex amplitudes for different paths light can take through a glass lens, and the result is that paths well off the path of least time cancel out efficiently, but those near it reinforce each other. Thus you recover classical laws of reflection and refraction. He can’t and doesn’t apply such simple graphical calculations to HEP situations above the field’s IR cutoff, where there is pair production occurring leading to a perturbative expansion for an infinite series of different possible Feynman diagrams, but the graphical application of path integrals to the simple low energy physics phenomena gives the reader a neat grasp of principles. This applies to low energy quantum gravitational phenomena just as it does to electromagnetism.
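The arrow-adding procedure is easy to sketch numerically. The example below is only an illustration, not Feynman’s own worked case: it uses reflection from a flat mirror rather than a lens, and the geometry (source and detector 1 m above the mirror and 2 m apart), the wavelength of 0.01 m, and the names `path_length` and `arrow_sum` are all assumptions chosen for convenience. Each path contributes a unit arrow whose direction is set by the path length, and the arrows are summed head to tail:

```python
import cmath
import math


def path_length(x, d=1.0, h=1.0):
    """Length of the path source -> mirror point (x, 0) -> detector.

    Source at (-d, h) and detector at (+d, h); geometry is an assumed example.
    """
    return math.hypot(x + d, h) + math.hypot(x - d, h)


def arrow_sum(x_start, x_stop, wavelength=0.01, step=0.001):
    """Add one unit arrow exp(i * phase) per mirror point between x_start and x_stop."""
    n_points = int(round((x_stop - x_start) / step)) + 1
    total = 0j
    for i in range(n_points):
        x = x_start + i * step
        phase = 2 * math.pi * path_length(x) / wavelength
        total += cmath.exp(1j * phase)
    return total


# Same number of arrows in each strip, but very different resultants.
central = abs(arrow_sum(-0.1, 0.1))   # strip around the least-time point x = 0
edge = abs(arrow_sum(0.8, 1.0))       # strip far from the least-time point

print(f"resultant from central strip: {central:.1f}")
print(f"resultant from edge strip:    {edge:.1f}")
```

Both strips contain the same number of arrows, but near the least-time point the phases change slowly and the arrows line up, while far from it the phase winds round many times and the arrows curl back on themselves, leaving only a small resultant.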

This is evidence for a spin-1 LeSage graviton (ignored by Pauli and Fierz when first proposing that the quantum of gravitation has spin-2) in the Hubble recession of galaxies, which implies cosmological acceleration a = dv/dt = d(HR)/d(R/c) = Hc. This was obtained in May 1996 from the Hubble relationship v = HR, simply by arguing that spacetime implies that the recession velocity is not just varying with apparent distance, but with time, thus it is an effective acceleration (we published this via p893 of the October 1996 issue of Electronics World and also the February 1997 issue of Science World, ISSN 1367-6172, after string theory reviewers rejected it from more specialized and appropriate journals, without giving any scientific reasons whatsoever).

This isn’t based on speculation: cosmological acceleration has been observed since 1998, when CCD telescopes plugged live into computers with supernova signature recognition software detected extremely distant supernovae and recorded their redshifts (see the article by the discoverer of cosmological acceleration, Dr Saul Perlmutter, on pages 53-60 of the April 2003 issue of Physics Today, linked here). The outward cosmological acceleration of the 3 × 10⁵² kg mass of the 9 × 10²¹ observable stars in galaxies observable by the Hubble Space Telescope (page 5 of a NASA report linked here) is approximately a = Hc = 6.9 × 10⁻¹⁰ m s⁻² (L. Smolin, The Trouble With Physics, Houghton Mifflin, N.Y., 2006, p. 209), giving an immense outward force under Newton’s 2nd law of F = ma = 1.8 × 10⁴³ Newtons. Newton’s 3rd law gives an equal inward (implosive type) reaction force, which predicts gravitation quantitatively. What part of this is speculative? Maybe you have some vague notion that scientific laws should not for some reason be applied to new situations, or should not be trusted if they make useful predictions which are confirmed experimentally, so maybe you vaguely don’t believe in applying Newton’s second and third law to masses accelerating at 6.9 × 10⁻¹⁰ m s⁻²! But why not? What part of “fact-based theory” do you have difficulty understanding?
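The arithmetic in the paragraph above can be reproduced in a few lines. This is a rough sketch using only the figures quoted there, plus an assumed value for the speed of light and for the megaparsec in metres (used only to express the implied Hubble parameter in conventional units); the variable names are illustrative:

```python
# Figures quoted in the text.
ACCELERATION = 6.9e-10   # a = Hc, in m/s^2
MASS = 3e52              # receding mass, in kg

# Assumed conversion constants (not from the post).
C = 2.99792458e8         # speed of light, m/s
MPC_IN_M = 3.0857e22     # one megaparsec in metres

# Hubble parameter implied by a = Hc.
hubble_si = ACCELERATION / C                     # in 1/s
hubble_km_s_mpc = hubble_si * MPC_IN_M / 1e3     # in km/s/Mpc

# Newton's 2nd law applied to the receding mass.
outward_force = MASS * ACCELERATION              # in newtons

print(f"H = {hubble_si:.3e} 1/s = {hubble_km_s_mpc:.1f} km/s/Mpc")
print(f"F = ma = {outward_force:.2e} N")
```

With these inputs the implied Hubble parameter is about 71 km/s/Mpc, and the product of the quoted mass and acceleration comes to roughly 2 × 10⁴³ N, the same order of magnitude as the 1.8 × 10⁴³ N figure quoted above.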

It is usually by applying facts and laws to new situations that progress is made in science. If you stick to applying known laws to situations they have already been applied to, you’ll be less likely to observe something new than if you try applying them to a situation which nobody has ever applied them to before. We should apply Newton’s laws to the accelerating cosmos and then focus on the immense forces and what they tell us about graviton exchange.

Above: The mainstream 2-dimensional ‘rubber sheet’ interpretation of general relativity says that mass-energy ‘indents’ spacetime, which responds like placing two heavy large balls on a mattress, which distorts more between the balls (where the distortions add up) than on the opposite sides. Hence the balls are pushed together: ‘Matter tells space how to curve, and space tells matter how to move’ (Professor John A. Wheeler). This illustrates how the mainstream (albeit arm-waving) explanation of general relativity is actually a theory that gravity is produced by space-time distorting to physically push objects together, not to pull them! (When this is pointed out to mainstream crackpot physicists, they naturally freak out and become angry, saying it is just a pointless analogy. But when the checkable predictions of the mechanism are explained, they may perform their always-entertaining “hear no evil, see no evil, speak no evil” act.)

Above: LeSage’s own illustration of quantum gravity in 1758. Like Lamarck’s evolution theory of 1809 (the one in which characteristics acquired during life are somehow supposed to be passed on genetically, rather than Darwin’s evolution in which genetic change occurs due to the inability of inferior individuals to pass on genes), LeSage’s theory was full of errors and is still derided today. The basic concept that mass is composed of fundamental particles with gravity due to a quantum field of gravitons exchanged between these fundamental particles of mass, is now a frontier of quantum field theory research. What is interesting is that quantum gravity theorists today don’t use the arguments used to “debunk” LeSage: they don’t argue that quantum gravity is impossible because gravitons in the vacuum would “slow down the planets by causing drag”. They recognise that gravitons are not real particles: they don’t obey the energy-momentum relationship or mass shell that applies to particles of say a gas or other fluid. Gravitons are thus off-shell or “virtual” radiations, which cause accelerative forces but don’t cause continuous gas type drag or the heating that occurs when objects move rapidly in a real fluid. While quantum gravity theorists realize that particle (graviton) mediated gravity is possible, LeSage’s mechanism of quantum gravity is still as derided today as Lamarck’s theory of evolution. Another analogy is the succession from Aristarchus of Samos, who first proposed the solar system in 250 B.C. against the mainstream earth-centred universe, to Copernicus’ inaccurate solar system (circular orbits and epicycles) of 1500 A.D. and to Kepler’s elliptical orbit solar system of 1609 A.D. Is there any point in insisting that Aristarchus was the original discoverer of the theory, when he failed to come up with a detailed, convincing and accurate theory? Similarly, Darwin rather than Lamarck is accredited with the theory of evolution, because he made the theory useful and thus scientific.

Since 1998, more and more data has been collected and the presence of a repulsive long-range force between masses has been vindicated observationally. The two consequences of spin-1 gravitons are the same thing: distant masses are pushed apart, while nearby small masses exchange gravitons less forcefully with one another than with masses around them, so they get pushed together like the Casimir force effect.

Using an extension to the standard “expanding raisin cake” explanation of cosmological expansion, in this spin-1 quantum gravity theory, the gravitons behave like the pressure of the expanding dough. Nearby raisins have less dough pressure between them to push them apart than they have pushing in on them from expanding dough on other sides, so they get pushed closer together, while distant raisins get pushed further apart. There is no separate “dark energy” or cosmological constant; both gravitation and cosmological acceleration are effects from spin-1 quantum gravity (see also the information in an earlier post, The spin-2 graviton mistake of Wolfgang Pauli and Markus Fierz for the mainstream spin-2 errors and the posts here and here for the corrections and links to other information).

As explained on the About page (which contains errors and needs updating), NASA has published Hubble Space Telescope estimates of the immense amount of receding matter in the universe, and since 1998 Perlmutter’s data on supernova luminosity versus redshift have shown the size of the tiny cosmological acceleration. So the relationship in the diagram above predicts gravity quantitatively, or you can normalize it to Newton’s empirical gravity law so that it then predicts the cosmological acceleration of the universe, which it has done since publication in October 1996, long before Perlmutter confirmed the predicted value (both are due to spin-1 gravitons).

Elitist hero worship of string theory hero Edward Witten by string theory critic Peter Woit

“Witten’s work is not just mathematical, but covers a lot of ground. The more mathematical end of it has been the most successful, but that’s partly because, in the thirty-some years of his career, no particle theorist at all has had the kind of success that leads to a Nobel Prize. If Witten had been born ten-twenty years earlier, I’d bet that he would have played some sort of important role in the development of the Standard Model, of a sort that would have involved a Nobel prize.” – Peter Woit, Not Even Wrong blog

With enemies like Peter Woit, Witten must be asking himself the question, who needs friends? More seriously, this is a useful statement of Dr Woit’s elitism problem. He thinks that Professor Witten tragically missed out on a Nobel Prize, despite his mathematical physics brilliance, by being born some decades too late. Duh. Doesn’t that prove him unnecessary? After all, he wasn’t needed. Physics did not go on hold for decades awaiting him. Others got the prizes for doing the physics. Maybe I’m just too stupid to understand true genius…

Like modern string theorists, Boscovich believed that forces are purely mathematical in nature and he argued that the stable sizes of various objects such as atoms correspond to the ranges of different parts of a unified force theory. The concept that the range of the strong nuclear force determines roughly the size of a nucleus would be a modern example of this concept, although he offered no real explanation for different forces such as gravity and electromagnetism, such as their very different strengths between two protons. Against Boscovich were Newton’s friend Fatio and his French student LeSage, who did not believe in a mathematical universe, but in mechanisms due to particles flying around in the vacuum. Various famous physicists like Maxwell and Kelvin in the Victorian era argued that particles flying around to cause forces by impacts and pressure would heat the planets up by drag, and slow them down so they spiralled quickly into the sun. Feynman recounts LeSage’s mechanism for gravity and the arguments against it in both his Lectures on Physics and his 1964 lectures The Character of Physical Law (audio linked here). However, the problem is that quantum field theory does accurately predict today the experimentally verified Casimir force, which is indeed caused by off-shell (off mass shell) field quanta pushing the plates together, somewhat akin to LeSage’s mechanism. The radiation in the vacuum which causes the Casimir force doesn’t slow down or heat up moving metal or other objects, and the Maxwell-Kelvin objections don’t apply to field quanta (off-shell radiations).

The Casimir force is produced because the metal plates exclude longer wavelengths from the space in between them, but you get the full spectrum of virtual radiation pushing against the plates from the opposing sides, so the net force pushes them together, giving “attraction”. Maxwell’s equations are formulated in terms of rank-1 (first order) gradients and curls of “field lines”, whereas general relativity is formulated in terms of rank-2 (second order) space curvatures or accelerations, so there is an artificial distinction between the two types of equations. Pauli and Fierz in 1939 argued that if gravitons are only exchanged between two masses which attract, they have to be spin-2. Electromagnetism can be mediated by spin-1 bosons with 4 polarizations to account for attraction and repulsion. Thus, the myth of linking the rank of the tensor equation to the spin began: rank-1 Maxwell equations implied spin-1 field quanta (virtual photons), and rank-2 general relativity implied spin-2 field quanta (gravitons). However, the rank of the equation is purely a synthetic issue of whether you choose to express the field in terms of Faraday style imaginary “field lines” (which Maxwell chose), or measurable spacetime curvature induced accelerations (which Einstein used). It’s not a fundamental distinction, since you could rewrite Maxwell’s equations in terms of accelerations, making them rank-2.

Furthermore, Pauli and Fierz wrongly assumed that you can treat two masses as exchanging gravitons, and ignore the exchange of gravitons with all the other masses in the universe. This is easily shown wrong, because the mass of the rest of the universe is immensely larger than an apple and the Earth (say), and furthermore the exchanged gravitons which are being received by the two masses under consideration are converging inward from isotropically distributed distant masses (galaxy clusters etc) in all directions. When you include those contributions, the Pauli-Fierz argument for spin-2 gravitons is disproved, because, just as in the Casimir effect between two parallel metal plates, the repulsion due to the exchange of spin-1 gravitons between the particles in the apple and those in the Earth is trivial compared to the inward forces from graviton exchange on the other sides.

Hence, spin-1 gravitons do the job of pushing the apple down to the Earth, because that push is greater than the repulsion due to spin-1 gravitons being exchanged between the apple’s particles and those of the Earth! The Pauli-Fierz argument from 1939 in effect (when considering the path integral for exchanged field quanta, gravitons) claimed to rule spin-1 gravitons out, but this was based upon ignoring the inward push from gravitons exchanged with distant masses. Of course, if you can ignore the inward push, then spin-1 gravitons cause repulsion between masses, which we observe as the cosmological acceleration of the universe. So the Pauli-Fierz argument is dumb twice over:

1. It fails to include the graviton exchange with every mass in the universe, yet there is no way to stop graviton exchange with every mass! Thus it falsely rejects spin-1 gravitons and argues wrongly for spin-2 gravitons in order that the field quanta exchanged between just two test masses will cause attraction! This doesn’t happen. If there were only two masses in the universe, they would repel, as we see from the cosmological acceleration, which is important over distances approaching the horizon radius (so that masses further away are beyond the horizon radius of light-speed field influence, and have no effect, reducing the geometry to a simple two body exchange of gravitons, causing them to simply repel). This argument is usually obfuscated further by the difference between the rank of the field equations. In fact, rank-1 equations are used in electromagnetism because of the field line concept, which describes forces by simple diverging and curling Faraday field lines (rank-1 equations), whereas rank-2 equations are used in general relativity because it does not describe the gravitational field in terms of field lines, but in terms of accelerative spacetime curvatures (rank-2 tensors). The obfuscation ignores the fact that the difference in rank is due to the field concept being used, and instead insists that the difference in rank is implied by the difference in spin, thus spin-1 for rank-1 and spin-2 for rank-2. This is a plain lie.

2. The second folly of the Pauli-Fierz argument is that in rejecting the spin-1 reality and postulating a false spin-2 graviton, it fails to predict the cosmological repulsion and thus acceleration between masses in the universe on large scales, where the distances between the masses approach their horizon radii, so each in effect has little or no further mass beyond it to push it towards the other. In this situation, the spin-1 graviton exchange causes the masses to repel apart with the cosmological acceleration predicted by this quantum gravity theory in 1996.

The bigger the mass of the Earth, the more shadowing and asymmetry of graviton forces on the top and bottom of the particles in the apple, so the apple is pushed down with greater force, thus it’s analogous to the mechanism of the Casimir force or LeSage.

The distinction between Newtonian and Einsteinian gravitation is twofold. First, there is the change from forces to spacetime curvature (acceleration) using the Ricci tensor and a very fiddled stress-energy tensor for the source of the field (which can’t represent real matter correctly as particles, using instead artificially averaged smooth distributions of mass-energy throughout a volume of space). Secondly, these two tensors could not be simply equated by Einstein without violating the conservation of mass-energy (conservation requires the divergence of the stress-energy tensor to vanish, but the divergence of the Ricci tensor does not vanish), so Einstein had to complicate the field equation with a contraction term which compensates for the non-vanishing divergence of the Ricci tensor. It is precisely this correction term for the conservation of mass-energy which makes the deflection of light equal to double that of a non-relativistic object like a bullet passing the sun. The reason is that all objects approaching the sun gain gravitational potential energy. In the case of a non-relativistic or slow moving bullet, this gained gravitational potential energy is used to do two things: (1) to speed up the bullet, and (2) to deflect the direction of the bullet more towards the sun. A relativistic particle like a photon cannot speed up, so all of the gravitational potential energy it gains is instead used to deflect it, hence it deflects by twice as much as Newton’s law predicts.
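The factor of two can be put into numbers for a ray grazing the sun. The sketch below assumes the standard textbook results, a Newtonian deflection of 2GM/(c²R) and a general relativistic deflection of 4GM/(c²R), neither of which is written out in this post, together with assumed values for the solar constants:

```python
import math

# Assumed constants (not from the post).
GM_SUN = 1.32712440018e20   # gravitational parameter of the sun, m^3/s^2
C = 2.99792458e8            # speed of light, m/s
R_SUN = 6.957e8             # solar radius, m (ray grazing the limb)

RAD_TO_ARCSEC = 180.0 * 3600.0 / math.pi

# Newtonian deflection of a fast particle grazing the sun (assumed formula).
newtonian_rad = 2.0 * GM_SUN / (C**2 * R_SUN)

# General relativity doubles it for light.
relativistic_rad = 2.0 * newtonian_rad

newtonian_arcsec = newtonian_rad * RAD_TO_ARCSEC
relativistic_arcsec = relativistic_rad * RAD_TO_ARCSEC

print(f"Newtonian deflection:    {newtonian_arcsec:.3f} arcsec")
print(f"Relativistic deflection: {relativistic_arcsec:.3f} arcsec")
```

The relativistic value is about 1.75 seconds of arc, which is the deflection of starlight grazing the sun, and the Newtonian value is half of that.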

“Many condensed matter systems are such that their collective excitations at low energies can be described by fields satisfying equations of motion formally indistinguishable from those of relativistic field theory. The finite speed of propagation of the disturbances in the effective fields (in the simplest models, the speed of sound) plays here the role of the speed of light in fundamental physics. However, these apparently relativistic fields are immersed in an external Newtonian world (the condensed matter system itself and the laboratory can be considered Newtonian, since all the velocities involved are much smaller than the velocity of light) which provides a privileged coordinate system and therefore seems to destroy the possibility of having a perfectly defined relativistic emergent world. In this essay we ask ourselves the following question: In a homogeneous condensed matter medium, is there a way for internal observers, dealing exclusively with the low-energy collective phenomena, to detect their state of uniform motion with respect to the medium? By proposing a thought experiment based on the construction of a Michelson-Morley interferometer made of quasi-particles, we show that a real Lorentz-FitzGerald contraction takes place, so that internal observers are unable to find out anything about their ‘absolute’ state of motion. Therefore, we also show that an effective but perfectly defined relativistic world can emerge in a fishbowl world situated inside a Newtonian (laboratory) system. This leads us to reflect on the various levels of description in physics, in particular regarding the quest towards a theory of quantum gravity….

“… Remarkably, all of relativity (at least, all of special relativity) could be taught as an effective theory by using only Newtonian language … In a way, the model we are discussing here could be seen as a variant of the old ether model. At the end of the 19th century, the ether assumption was so entrenched in the physical community that, even in the light of the null result of the Michelson-Morley experiment, nobody thought immediately about discarding it. Until the acceptance of special relativity, the best candidate to explain this null result was the Lorentz-FitzGerald contraction hypothesis… we consider our model of a relativistic world in a fishbowl, itself immersed in a Newtonian external world, as a source of reflection, as a Gedankenmodel. By no means are we suggesting that there is a world beyond our relativistic world describable in all its facets in Newtonian terms. Coming back to the contraction hypothesis of Lorentz and FitzGerald, it is generally considered to be ad hoc. However, this might have more to do with the caution of the authors, who themselves presented it as a hypothesis, than with the naturalness or not of the assumption… The ether theory had not been disproved, it merely became superfluous. Einstein realised that the knowledge of the elementary interactions of matter was not advanced enough to make any claim about the relation between the constitution of matter (the ‘molecular forces’), and a deeper layer of description (the ‘ether’) with certainty. Thus his formulation of special relativity was an advance within the given context, precisely because it avoided making any claim about the fundamental structure of matter, and limited itself to an effective macroscopic description.”

For more on this subject, see the earlier post linked here.

(This post includes some of the quantum gravity material from the previous post, omitting Distler’s false dogma of the Standard Model and the reason why electroweak symmetry breaking was rejected by Feynman as being an ad hoc fiddle. Different approaches are readable to different people, so we will continue to try to edit material in different ways, for the benefit of the widest possible readership.)

Update (17 July 2010):

New experimental evidence has dramatically reduced the statistical uncertainty on the mass of the heaviest quark, the top or truth: M(top) = 173.1 +/- 0.7 (stat) +/- 0.9 (syst) GeV, a measurement with a total uncertainty of only 1.3 GeV, or 0.75%. See the earlier post linked here for our correlation of quark masses in the quantum gravity modification to the Standard Model: our correlation found that the top quark mass is (8/3) * Pi^4 * M(Z_0) * alpha = 173 GeV, where the presence of the Z_0 mass is due to its role in replacing the Higgs field as the miring particle in the vacuum which produces mass in this quantum gravity theory.
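As a quick arithmetic check on the correlation quoted above, here is a minimal sketch that evaluates the expression numerically. It assumes "Pi4" means Pi to the fourth power (the reading that reproduces the quoted 173 GeV), and it uses standard approximate values for the Z_0 mass and the low-energy fine-structure constant, neither of which is stated in the post.

```python
import math

# Assumed inputs (not given in the post): approximate Z boson mass in GeV
# and the low-energy fine-structure constant.
M_Z_GEV = 91.1876
ALPHA = 1 / 137.036

def top_mass_estimate(m_z_gev=M_Z_GEV, alpha=ALPHA):
    """Evaluate (8/3) * Pi^4 * M(Z_0) * alpha, in GeV."""
    return (8.0 / 3.0) * math.pi**4 * m_z_gev * alpha

measured = 173.1  # GeV, central value quoted in the update above
estimate = top_mass_estimate()
print(f"estimate = {estimate:.1f} GeV, measured = {measured} GeV")
print(f"difference = {estimate - measured:+.1f} GeV")
```

With these inputs the expression comes out close to 173 GeV, inside the quoted 1.3 GeV total uncertainty. The check only confirms the arithmetic, not the physical reasoning behind the formula.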

More information here.

Dark energy detection paper reviewed by Sabine at Backreaction blog

Martin L. Perl and Holger Mueller have written an arXiv paper on “Exploring the possibility of detecting dark energy in a terrestrial experiment using atom interferometry”:

“The majority of astronomers and physicists accept the reality of dark energy but also believe it can only be studied indirectly through observation of the motions of galaxies. This paper opens the experimental question of whether it is possible to directly detect dark energy on earth using atom interferometry through a force hypothetically caused by a gradient in the dark energy density. Our proposed experimental design is outlined. The possibility of detecting other weak fields is briefly discussed.”

I objected to Sabine’s claim:

“Due to its peculiar properties, as well as the specific value of its measured density, the existence of dark energy is one of the biggest puzzles in theoretical physics today. While it is easily possible to incorporate it into our models as a source for gravity, its microscopic origin is not understood.”

We predicted dark energy in 1996, two years before it was measured to have the predicted value. Sabine has kept her cool, probably with a lot of restraint, in the comments section, instead of instantly denouncing the work as being subhuman or whatever, although she has objected to replies to her questions. In other words, when she asks a question, it’s not in the hope of getting the answer. Then Sabine asks me not to reply but raises further questions… It’s like a political debate. Someone states a fact. Someone then “raises a question” about the fact when the debate has run out of time or further discussion is stopped, so no reply can be given. Then the onlooking journalists must report truthfully that “questions were raised but not answered”:

nige said…

From a June 10, 2009 New Scientist article:

‘“We don’t know that gravity is strictly an attractive force,” cautions Paul Wesson of the University of Waterloo in Ontario, Canada. He points to the “dark energy” that seems to be accelerating the expansion of the universe, and suggests it may indicate that gravity can work both ways. Some physicists speculate that dark energy could be a repulsive gravitational force that only acts over large scales. “There is precedent for such behaviour in a fundamental force,” Wesson says. “The strong nuclear force is attractive at some distances and repulsive at others.”’

So if you drop an apple, you detect “dark energy” which causes it to accelerate downwards; the mechanism for this dual role of the gravitational field was predicted and published in 1996 including the a ~ Hv cosmological acceleration of the universe and thus the “effective” value of the small positive CC, two years before it was observed. (Feel free to delete this comment or make a politically-correct caustic remark about crackpotism.)

Bee said…

Well, I agree with the first part of your comment, though I find it very misleading to talk about dark energy as a repulsive gravitational force. It’s not the same thing. You want to talk about repulsive gravity, you’ll first have to come up with a consistent theory that realizes this. I have no clue what the second part of your comment is supposed to mean. You trying to say you can detect the CC in watching a falling apple? Arguably, if the CC was locally present it would slightly influence the fall of your apple, but I can’t see how this would even be remotely detectable. You run into the same problem as Perl and Mueller, it’s way too small compared to the gravitational forces we deal with on Earth/on small distances. Best,

B.

9:27 AM, July 28, 2010

Hi Bee,

Pauli and Fierz in 1939 found that for two masses to attract by exchange of gravitons, the gravitons need to be spin-2:

‘In the particular case of spin 2, rest-mass zero, the equations agree in the force-free case with Einstein’s equations for gravitational waves in general relativity in first approximation …’

– Markus Fierz and Wolfgang Pauli, ‘On relativistic wave equations for particles of arbitrary spin in an electromagnetic field’, Proc. Roy. Soc. London., v. A173, pp. 211-232 (1939).

What I predicted in 1996 is that two masses don’t attract; they repel. Hence spin-1 gravitons. This predicts the cosmological acceleration accurately when you use the same model for gravitation.

So why does an apple appear to be attracted to the Earth, instead of being repelled?

Answer: the exchange of spin-1 gravitons between apple and Earth does cause a repulsion.

However, that repulsion is trivial and is simply not significant compared to the effects of the exchange of gravitons with distant masses, with gravitons converging inward from great masses (galaxy clusters etc) isotropically distributed all around us.

The Pauli-Fierz theory of spin-2 gravitons necessitates hand-wavingly ignoring the exchange of gravitons with all the mass in the universe, and just pretending that the only exchange of gravitons is between the two masses you are concentrating on. It’s the old reductionist fallacy; quantum gravity requires holistic thinking in that there is no mechanism suggested by anyone to stop gravitons being exchanged between all of the masses in the universe.

Just like the Casimir force where virtual radiation pushes two conducting metal plates together in a vacuum, gravity can operate with spin-1 field quanta!

Where masses are so distant from one another that they begin to approach each other’s cosmological “horizon” distance, the gravitational mechanism of converging gravitons from greater distances no longer applies to the geometry of the situation, so repulsion predominates. Hence “dark energy” causing accelerating expansion!

Kind regards,

Nigel Cook

Bee said…

For all I know, just from the attraction you get that for charges of equal sign the spin has to be integer. (Gravity is not universally attractive. It is attractive between positive masses. However, we haven’t seen any negative masses and they are generically problematic.) You want spin 2 because it couples universally. Masses around us *do* affect the gravitational attraction between bodies on Earth, you can measure it, you can even see it. But point is that in most situations this influence is luckily negligible. (You don’t have to know today’s motion of Venus to get on the highway.) In any case, if you’d consider an isotropic distribution the effect would null out. (There’s no gravitational field inside a sphere.) We have plenty of observational evidence that Einstein’s spin-2 description of gravity is extremely accurate. Instead of making new predictions, maybe better first reproduce the old ones. Best,

B.

11:06 AM, July 28, 2010

“Masses around us *do* affect the gravitational attraction between bodies on Earth, you can measure it, you can even see it. But point is that in most situations this influence is luckily negligible.”

Take F=ma. Stick in the observed Hubble mass of the accelerating universe and the observed cosmological acceleration of the universe, ~6 x 10^(-10) ms^(-2). This gives something like 10^43 Newtons of radial outward force. Newton’s 3rd law then suggests an equal and opposite reaction force. This 10^43 Newtons inward is hardly negligible, and my paper in 1996 showed that it predicts gravitation (e.g. the value of G) correctly within the input errors in the data, with particles introducing asymmetry and being pushed together. This was discussed by Feynman in 1964: it not only predicts gravity but also explains the radial contraction effect of general relativity which causes the departures from Newtonian gravitation. Feynman raised the point that, as of 1964, it predicted nothing that had been observed. The cosmological acceleration was discovered in 1998. Feynman also pointed out that on-shell particles would slow down moving objects, so the original LeSage version of this gravity idea is wrong. However, there is no true link between rank-2 tensors (general relativity) and spin-2 gravitons, or between rank-1 tensors (Maxwell) and spin-1 field quanta (photons), that would prove spin-2 gravitons from the Pauli-Fierz paper, as is often misleadingly claimed. The rank is simply the order of the differential equations. General relativity describes gravitational fields in terms of curved spacetime, i.e. acceleration (rank-2 tensors), while Maxwell’s equations describe the field in terms of diverging and curling spatial field lines (rank-1 tensors). If you wrote a rank-2 tensor for Coulomb’s law (which isn’t needed but is possible), you would then have a rank-2 tensor equation for a spin-1 field. Thus, there is no real rank-spin connection.

Cheers,

Nigel
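The F=ma estimate in the comment above is easy to reproduce as an order-of-magnitude sketch. The comment does not state the "Hubble mass" it used, so the two mass values below are assumptions chosen only to bracket commonly quoted figures for the observable universe; the acceleration is the one given in the comment.

```python
# Acceleration quoted in the comment above.
ACCELERATION = 6e-10  # m/s^2

# Assumed mass range for the observable universe, in kg. The comment does not
# give the "Hubble mass" it used, so these two values are illustrative only.
ASSUMED_MASSES_KG = (1e52, 1e53)

def outward_force(mass_kg, acceleration=ACCELERATION):
    """Newton's second law, F = m * a, in newtons."""
    return mass_kg * acceleration

for mass in ASSUMED_MASSES_KG:
    print(f"m = {mass:.0e} kg  ->  F = {outward_force(mass):.1e} N")
```

For masses in this assumed range the product lands within an order of magnitude of the 10^43 Newtons quoted in the comment; the result scales linearly with whatever mass is chosen.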

Bee said…

It doesn’t give any outward force, see above. It’s one of the things that you can wreck with modifications of GR, has a name, but forgot, sorry. The FRW solution doesn’t apply on sub-galactic scales anyway. Besides that, may I kindly ask you to either come back to topic of this post or drop your elaborations. Best,

B.

11:46 AM, July 28, 2010

nige said…

“It doesn’t give any outward force, see above.”

I don’t see anything above about this. The cosmological acceleration outward is on the order a~Hc. The simplest way to approach this is to look for the outward force and the inward reaction force.

“The FRW solution doesn’t apply on sub-galactic scales anyway.”

The FRW solution is not referred to. Curvature applies on all scales and the contraction of Earth’s radius (see Feynman’s lectures) is (1/3)MG/c^2 = 1.5 mm.

“Besides that, may I kindly ask you to either come back to topic of this post or drop your elaborations.”

I thought the post was about detection of dark energy. You can predict the acceleration of the universe and thus dark energy from the fall of an apple!

However, thanks for being kind. I won’t make any more comments on this post as I can see you’re bored by this approach which predicted the acceleration accurately in 1996.

Cheers,

Nigel
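Two numbers in the comment above, the a~Hc acceleration and the (1/3)MG/c^2 radial contraction for the Earth, can be checked with a short sketch. All constants below are assumed standard approximate values, and the Hubble parameter of 70 km/s/Mpc in particular is an assumption, since the thread does not give one.

```python
# Assumed constants (none of these numerical values appear in the thread).
C = 2.998e8            # speed of light, m/s
G = 6.674e-11          # Newton's constant, m^3 kg^-1 s^-2
M_EARTH = 5.972e24     # Earth's mass, kg
MPC_IN_M = 3.0857e22   # one megaparsec in metres
H0_KM_S_MPC = 70.0     # assumed Hubble parameter, km/s/Mpc

def hubble_acceleration(h0_km_s_mpc=H0_KM_S_MPC):
    """Order-of-magnitude acceleration a ~ H * c, in m/s^2."""
    h_si = h0_km_s_mpc * 1e3 / MPC_IN_M  # convert H to units of 1/s
    return h_si * C

def radial_contraction(mass_kg):
    """Radial contraction (1/3) * G * M / c^2, in metres."""
    return G * mass_kg / (3.0 * C**2)

print(f"a ~ Hc = {hubble_acceleration():.1e} m/s^2")
print(f"Earth contraction = {radial_contraction(M_EARTH) * 1e3:.2f} mm")
```

With these inputs a~Hc comes out at a few times 10^(-10) ms^(-2), the same order as the ~6 x 10^(-10) ms^(-2) quoted earlier in the thread, and the Earth figure comes out at roughly 1.5 mm.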

Bee said…

I said above the gravitational force inside a spherical cavity is zero. If your theory doesn’t have this feature, you have a grave problem with cosmological perturbations and structure formation.

“The FRW solution is not referred to.”

Then what is H?

This post is about the paper by Perl and Mueller, read first sentence. Best,

B.

“Then what is H?”

Hubble’s parameter, as in v = HR, which Hubble discovered observationally in 1929.

It’s observational and is not predicted by general relativity, in which metrics can be found to represent anything.

Gravitational force inside a spherical cavity will be zero: in the middle there is no asymmetry, and thus no gravity (which is an asymmetry in graviton exchange). Away from the middle you just have Newton’s “hollow shell” theorem, where the proximity of an observer to the shell at one side means that most of the mass is on the opposite side, with the inverse-square law compensating and eliminating gravitational force. For weak field (little contraction) this [quantum gravity] gives Newton’s law with all the usual implications.

Best,

anon.
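Newton's "hollow shell" theorem, which both sides of this exchange rely on, can be checked numerically. The sketch below is only an illustration of the classical inverse-square result, not of any graviton mechanism: it slices a uniform shell into thin rings and sums their pull on a test mass on the axis. Units with G = M = R = 1 and the function name are assumptions made for the example.

```python
import math

def shell_axial_field(z, radius=1.0, mass=1.0, g_newton=1.0, n=20000):
    """Field along the axis at distance z from the centre of a uniform shell.

    The shell is cut into n rings at polar angle theta; each ring has mass
    (M/2) * sin(theta) * d_theta, and only its axial pull survives by symmetry.
    Positive values point away from the centre, negative values towards it.
    """
    total = 0.0
    d_theta = math.pi / n
    for i in range(n):
        theta = (i + 0.5) * d_theta  # midpoint rule
        dm = 0.5 * mass * math.sin(theta) * d_theta
        # Squared distance from the test point (0, 0, z) to the ring.
        s2 = radius**2 + z**2 - 2.0 * radius * z * math.cos(theta)
        total += g_newton * dm * (radius * math.cos(theta) - z) / s2**1.5
    return total

for z in (0.0, 0.5, 0.9, 2.0):
    print(f"z = {z:3.1f} R  ->  field = {shell_axial_field(z):+.6f}")
```

Inside the shell the sum cancels to within numerical error at every position, not only at the centre, while outside (z = 2R) it reproduces the point-mass value of -GM/z^2.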

Bee said…

Nige,

My question for H was rhetoric. It’s a parameter in the FRW metric, yet you say you make no reference to the FRW metric. It doesn’t fit. How does one compute the gravitational force: you compute the gravitational field. There is, on subgalactic scales, no H in the solution. Galaxies don’t expand. It’s the space between them that does. Consequently, there’s no H in your field and I don’t know what you’re even talking about. Correct, the gravitational field inside a sphere is zero. So why care what’s outside the sphere? Last warning: your next comment is not about the Mueller & Perl paper, and it goes into the garbage bin. Best,

B.

3:25 AM, July 29, 2010

“It’s a parameter in the FRW metric, yet you say you make no reference to the FRW metric. It doesn’t fit.”

Unlike my prediction of the acceleration of the universe published in 1996, the FRW metric failed to predict the acceleration of the universe, because general relativity fails to include the dynamics of quantum gravity. The FRW metric isn’t correct physics; it doesn’t explain the CC. Forget it.

“Galaxies don’t expand. It’s the space between them that does.”

Gravity binds galaxies together. What you are saying is being said as if you think it disproves quantum gravity predictions made in 1996, but it doesn’t have any relevance.

“Correct, the gravitational field inside a sphere is zero. So why care what’s outside the sphere?”

Because the apple is outside the sphere and it’s exchanging gravitons with the entire surrounding universe, so the gravitons exchanged from that mass converge inwards.

“Last warning: your next comment is not about the Mueller & Perl paper, and it goes into the garbage bin.”