Path integrals

Richard P. Feynman, QED, Penguin, 1990, pp. 55-6, and 84:

‘I would like to put the uncertainty principle in its historical place: when the revolutionary ideas of quantum physics were first coming out, people still tried to understand them in terms of old-fashioned ideas … But at a certain point the old fashioned ideas would begin to fail, so a warning was developed that said, in effect, “Your old-fashioned ideas are no damn good when …”. If you get rid of all the old-fashioned ideas and instead use the ideas that I’m explaining in these lectures – adding arrows [arrows = path phase amplitudes in the path integral, exp(iS) -> cos S, for the real phase component] for all the ways an event can happen – there is no need for an uncertainty principle! … on a small scale, such as inside an atom, the space is so small that there is no main path, no “orbit”; there are all sorts of ways the electron could go, each with an amplitude. The phenomenon of interference [by field quanta] becomes very important …’

“Path integrals” are the underlying physical dynamics of paths in 2nd quantization, i.e. the Feynman pictorial interpretation of ∫exp(iS) dx^n, where S = ∫L dt. Feynman’s diagrams drop complex space, so this becomes ∫cos S dx^3, which is even simpler when you plot it on a diagram; please see Feynman’s Figure 24 here: https://faculty.washington.edu/seattle/physics441/feynman-QED/qed2.pdf

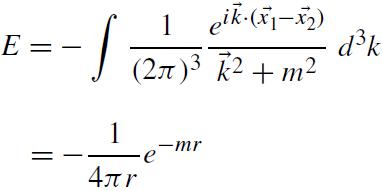

“Path integral” is really not an integral, but a discrete summation: ∑ cos S, over all paths that can connect the point of path origin to the point of detection. What’s really important for making calculations in QFT is the perturbative expansion’s “propagator”, usually considered the Fourier transform of the Yukawa-Coulomb potential energy, U = [exp(-mr)]/(4πr). In Feynman’s simplified (and physically accurate) “real (non-complex) space path summation” we don’t need the Fourier transform, just the Laplace transform which gives the following “fun” calculation (we evaluate the integral over radial distances 0 to infinity):

propagator, V = ∫U [exp(-kr)] d^3 r

= ∫U [exp(-kr)] (4πr^2) dr

= ∫ { [exp(-mr)]/(4πr) } [exp(-kr)] (4πr^2) dr

= ∫ r [exp{-(k + m)r}] dr

= 1/(k + m)^2 = the “propagator”

And that’s it. To calculate each Feynman diagram’s contribution to the total amplitude, you simply multiply the “propagator” derived above, 1/(k + m)^2, by the number of internal lines in the Feynman diagram, and also multiply the number of vertices by the force coupling constant. The perturbative expansion to the so-called “path integral” (which I’d reformulate as the summation ∫exp(iS) dx^n -> ∑ cos S, following the older not younger version of Feynman) then becomes simple.
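The radial integral in the derivation above is easy to check numerically. The following is only a minimal sketch: the function names, the cutoff `r_max` (standing in for infinity) and the sample values of k and m are assumptions made for illustration, not part of the derivation itself.

```python
import math


def radial_integrand(r, k, m):
    """Integrand r * exp(-(k + m) * r), left after the 4*pi*r factors cancel."""
    return r * math.exp(-(k + m) * r)


def propagator_numeric(k, m, r_max=60.0, steps=200_000):
    """Composite Simpson's rule on [0, r_max]; r_max is an assumed cutoff for infinity."""
    if steps % 2:
        steps += 1  # Simpson's rule needs an even number of intervals
    h = r_max / steps
    total = radial_integrand(0.0, k, m) + radial_integrand(r_max, k, m)
    for i in range(1, steps):
        weight = 4 if i % 2 else 2
        total += weight * radial_integrand(i * h, k, m)
    return total * h / 3.0


def propagator_closed_form(k, m):
    """Closed-form result 1/(k + m)^2 quoted in the text."""
    return 1.0 / (k + m) ** 2


if __name__ == "__main__":
    # Sample (k, m) pairs are arbitrary illustrative values.
    for k, m in [(1.0, 0.5), (2.0, 1.0), (0.5, 0.5)]:
        print(k, m, propagator_numeric(k, m), propagator_closed_form(k, m))
```

For each sample pair the numerical value should agree with 1/(k + m)^2 to many decimal places, provided (k + m) * r_max is large enough for the exponential tail to be negligible.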

There shouldn’t be any calculus in quantum field theory, because the whole point is that you are replacing continuous fields with a series of point interactions. Like pollen fragments undergoing Brownian motion due to air molecule bombardment, the electron undergoes a series of interactions with Coulomb field quanta (“virtual” photons) which deflect it. A better example of the failure of calculus in the real world of discrete interactions in the 1950s was the fallout speed error from the H-bomb’s 100,000 foot mushroom cloud: calculations with Stokes law and average air viscosity massively underestimate the fallout descent rate of 5 micron diameter particles at such high altitudes. The standard theory of a continuously acting force gives you a “terminal velocity” which doesn’t actually exist: the fallout particle instead plummets like an accelerating apple in a vacuum in free-fall, apart from occasional discrete collisions (impacts) with air molecules in that low-density air. It turns out that the continuous (calculus based) approximation is only valid for particles large enough, in air of density high enough, that the rate of interaction between the dust and the bombarding air molecules is high enough to prevent significant acceleration between impacts! There is every reason to think that this kind of error is also applicable to quantum field theory.
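One rough way to see where the continuous approximation stops being trustworthy is to compare the particle radius with the mean free path of the air molecules (the Knudsen number). The sketch below is illustrative only: every numerical constant in it (particle density, viscosity, sea-level mean free path, the air density ratio at roughly 100,000 feet) is an approximate assumed value, not data from the fallout studies mentioned above.

```python
# All constants are rough, assumed values chosen for illustration only.
G = 9.81                            # m/s^2, gravitational acceleration
AIR_VISCOSITY = 1.8e-5              # Pa*s, approximate near-surface air viscosity
PARTICLE_DENSITY = 2500.0           # kg/m^3, assumed density of a fallout particle
MEAN_FREE_PATH_SEA_LEVEL = 6.6e-8   # m, approximate mean free path of air at sea level
DENSITY_RATIO_30KM = 0.015          # assumed air density near 30 km relative to sea level


def stokes_terminal_velocity(diameter, particle_density=PARTICLE_DENSITY,
                             viscosity=AIR_VISCOSITY):
    """Stokes law terminal velocity (m/s), neglecting the buoyancy of the air."""
    radius = diameter / 2.0
    return 2.0 * radius ** 2 * G * particle_density / (9.0 * viscosity)


def knudsen_number(diameter, density_ratio):
    """Mean free path divided by particle radius.

    The mean free path is taken to scale inversely with air density,
    which is itself a simplifying assumption.
    """
    mean_free_path = MEAN_FREE_PATH_SEA_LEVEL / density_ratio
    return mean_free_path / (diameter / 2.0)


if __name__ == "__main__":
    d = 5e-6  # the 5 micron diameter particle from the text
    print("Stokes velocity (m/s):", stokes_terminal_velocity(d))
    print("Knudsen number at sea level:", knudsen_number(d, 1.0))
    print("Knudsen number near 30 km:", knudsen_number(d, DENSITY_RATIO_30KM))
```

With these assumed numbers the Knudsen number is small at sea level but exceeds 1 at altitude, i.e. the particle is no longer large compared with the spacing between molecular impacts, which is the regime where a continuum drag law is usually not expected to hold.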

There’s a further application of the fallout analogy of use in understanding the path integral, namely Schuert’s method of mapping out – and adding up the contributions to – path integrals for particles falling through a wind shear structure which carries them in different directions and with different speeds at different altitudes, in order to work out the “hot line” of maximum fallout on the ground. This has a certain analogy to the use of path integrals to work out the path of least action:

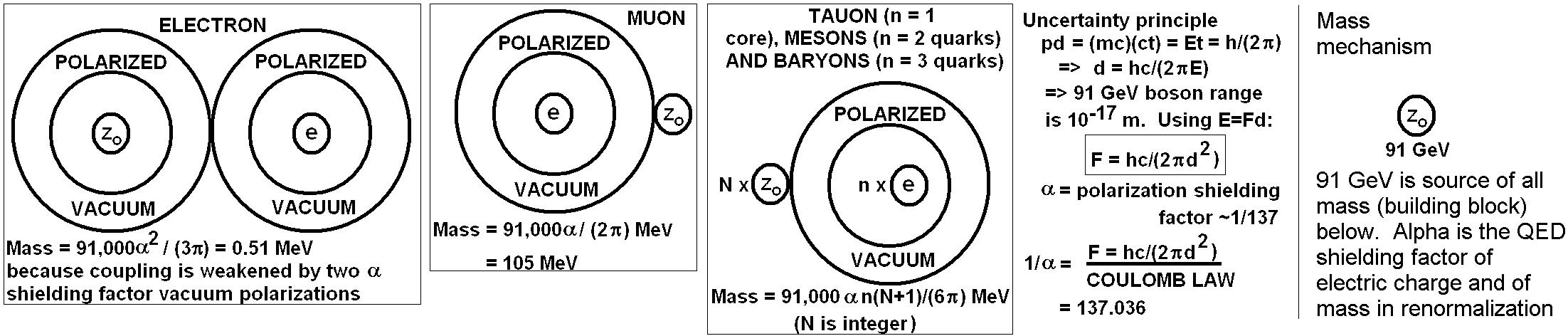

“There are loads of other “clues”. One massive issue which again is totally ignored by the mainstream (including PW) and by popular science writers is that the quantum electrodynamic propagator has the form: 1/(m + k)^2, where m is the virtual massive (short ranged) electromagnetic field quanta (e.g. the virtual electrons and positrons that contribute vacuum polarization shielding and other effects between IR and UV cutoffs), and k is the term for the massless (infinite range) classical Coulomb field quanta (virtual photons which cause all electromagnetic interactions at energies lower than the IR cutoff, i.e. below collision energies of about 1 MeV, which is the minimum energy needed for pair production of electrons and positrons).”

“The point is, you have two separate contributions to the mass of a particle from such a propagator: k gives you the rest mass, while m gives you additional mass due to the running coupling for collision energy >1MeV. (See for instance fig 1 in https://vixra.org/pdf/1408.0151v1.pdf .)”

“The fact that you can separate the Coulomb propagator’s classical mass of a fermion at low energy (<1 MeV) from the increased mass due to the running coupling at higher energy, proves that there’s a physical mechanism for particle masses in general: the virtual fermions must contribute the increase in mass at high energy by vacuum polarization, which pulls them apart, taking Coulomb field energy and thus shielding the electric charge (the experimentally measured and proved running of the QED coupling with energy). In being polarized by the electric field, the virtual positron and electron pair (or muon or tauon or whatever) soaks up real electric field energy E in addition to Heisenberg’s borrowed vacuum energy (h-bar/t). So the virtual particles must have a total energy E + (h-bar/t), which allows them to turn the energy they have absorbed (in being polarized) into mass. This understanding of the two terms in the propagator, m and k, therefore gives you a very simple mechanism basis for predicting all particle masses, m, which shows how the mass gap originates from treating the propagator as a simple physical model of real phenomena…”

Basically, Woit has a useful clue, but is sailing in the wrong direction due to an elitist bias that fancy mathematics is definitely the right way to go – it helps in some ways but you need to also correct errors in the standard model and in some areas like the path integral, the direction should be away from calculus and towards discrete interactions. We should look at what’s physically occurring and take the perturbative expansion as reality, seeking to relegate calculus to just an approximation for high rates of interactions, but of much use for understanding mechanisms, which are discrete summations, not continuous variables in integrals.

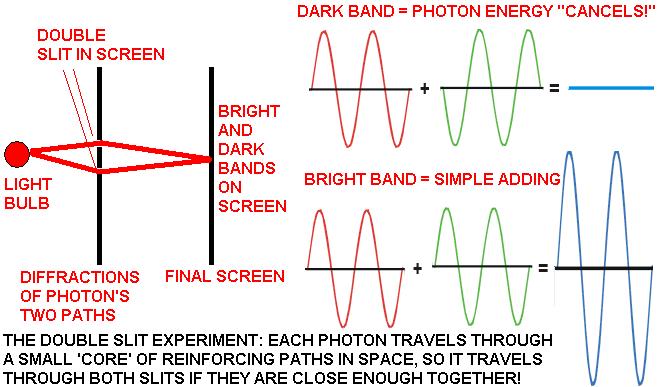

Above: the double slit experiment is as Feynman stated the ‘central paradox of quantum mechanics’. Every single photon gets diffracted by both of two nearby slits in a screen because photon energy doesn’t travel along a single path, but instead, as Feynman states, it travels along multiple paths, most of which normally cancel out to create the illusion that light only travels along the path of least time (where action is minimized), so the double slit and a few other situations are the rare special cases that show up the true nature of light photons as individually traveling along spatially extended paths:

‘Light … uses a small core of nearby space. (In the same way, a mirror has to have enough size to reflect normally: if the mirror is too small for the core of nearby paths, the light scatters in many directions, no matter where you put the mirror.)’ – R. P. Feynman, QED, Penguin, 1990, page 54.

If there are two effective paths that deliver energy to the screen, path 1 and path 2 (as in the double slit experiment with a single photon) then the square of the resultant of the amplitudes for the two paths, A1 and A2, respectively, will be given by |A1 + A2|^2, where squaring the modulus of the sum of the amplitudes is Born’s interpretation of the relationship between probability density and the wavefunction. Similarly, in electromagnetism the energy density is proportional to the square of the electric field intensity, which represents a relative wavefunction amplitude for electromagnetic radiation (there’s no mystery to what the “wavefunction” represents for light waves).
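The two-path rule |A1 + A2|^2 can be tried out directly. This is a minimal sketch which assumes each amplitude is a unit-length “arrow” exp(i × phase); the function name and the normalization constant `b` are hypothetical names introduced just for this example.

```python
import cmath
import math


def two_path_probability(phase_1, phase_2, b=1.0):
    """Relative probability b * |A1 + A2|^2 for two unit arrows.

    Each amplitude is assumed to be exp(i * phase); b is an arbitrary
    normalization constant.
    """
    a1 = cmath.exp(1j * phase_1)
    a2 = cmath.exp(1j * phase_2)
    return b * abs(a1 + a2) ** 2


if __name__ == "__main__":
    # Scan the phase difference between the two paths from 0 to 2*pi.
    for step in range(9):
        delta = step * math.pi / 4.0
        print(round(delta, 3), round(two_path_probability(0.0, delta), 6))
```

The result equals 2 + 2 cos(phase difference): arrows in step give four times the single-path value, while arrows exactly out of step cancel.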

Feynman’s genius in discovering path integrals was the amazing intuition it took to realize that Dirac’s ‘propagator’ (derived by Dirac in 1933 from the time-dependent Schroedinger equation’s result for the probability of a path: exp(-iHT/h-bar), where H is the Hamiltonian for a path, i.e. simply the kinetic energy if dealing with a free particle, and T is simply time), namely exp(iS/h-bar) where S is action, could be used to represent each path without the need for squaring the modulus of the amplitude! The complex number in the exponent does it all for you, so you just need to integrate exp(iS/h-bar) for all paths contributing energy that affects the overall amplitude. Hence, the relative probability for any given pair of two paths in the double slit experiment is simply: |A1 + A2|^2 = B|exp(iS(1)/h-bar) + exp(iS(2)/h-bar)|^2,

where B is a constant of proportionality (easily determined by adding up all paths and normalizing the summation to a total probability of 1, since energy is conserved and the photon definitely ends up somewhere, so the sum of all possible path amplitudes must be equal to a probability of exactly 1 of finding the photon!). Dirac had taken the Hamiltonian amplitude exp(-iHT/h-bar) and derived the more fundamental lagrangian amplitude for action S, i.e. exp(iS/h-bar). Dirac however restricted his work on this problem to merely the classical action S, whereas Feynman had the genius to extend it to sum over the actions S for all paths, not just the classical action! However notice that this summation over all paths has never, ever, ever been proved to require a summation of any curved paths, where there is no mechanism for such curvature in quantum fields. Curved geodesics in general relativity are merely the results of using differential geometry with a necessarily false smoothed-out source term tensor T_ab to deliberately and artificially give rise to a smooth curvature! In place of the factually proved discontinuous distribution of particles of matter and energy (photons, etc.) which give rise to all gravitational fields, the stress-energy-momentum tensor T_ab in the field equation of general relativity uses an artificially smoothed-out averaged distribution, with the real world particulate field discontinuities falsely eliminated! E.g., all of the particles of matter and energy are just ignored and replaced by a totally fictitious ‘perfect fluid’ continuum in general relativity: this false source field continuum then gives rise to the equally unphysical curved spacetime continuum because it is equated to the Ricci curvature tensor minus a contraction term for conservation of mass-energy.

So, instead of calculating the gravitational fields from a large number of discontinuous particles, general relativity averages out the mass per unit volume and uses the average, giving rise to a false model of gravity which is only approximately valid for certain conditions where the statistical number of gravitons is large enough to average out and appear like a classical field! General relativity is therefore not ‘only missing’ a vital ingredient (quantum fields), but it is entirely a false framework to start off with because of the mass-energy-momentum tensor which doesn’t describe real particulate gravity-causing fields, but only represents at best artificial approximations to such fields which are roughly applicable for large masses.

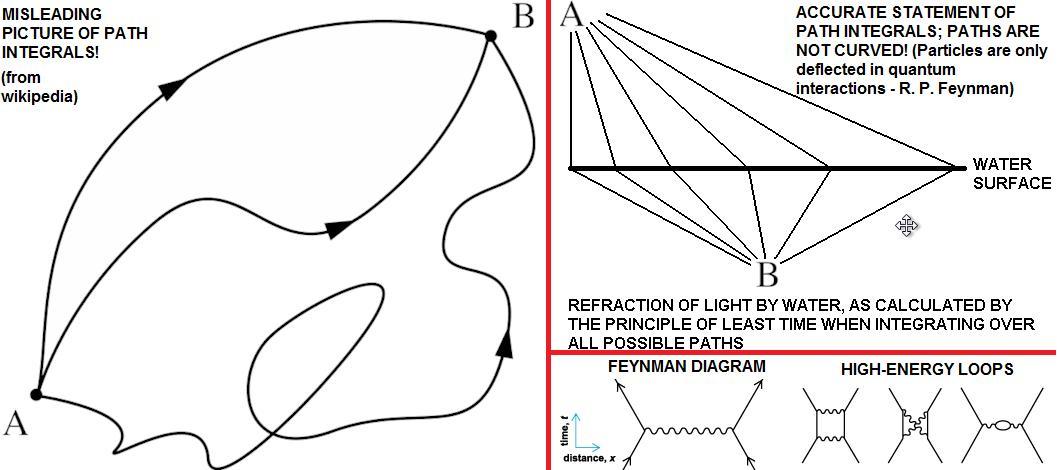

Anyone with a knowledge of calculus and more than one brain cell knows that discontinuities cause problems to differential equations; vertical steps produce infinities when differentiated to find gradients! There is actually no mechanism for a smooth curvature of geodesics in quantum field theory, where nobody has ever proved that particles (including virtual particles and cancelled particle paths in path integrals!) don’t travel according to Newton’s 1st law of motion (straight lines in the absence of quantum interactions which impart forces!). Crackpottery is often introduced into mainstream accounts of path integrals by false claims that curved particle paths are ‘permitted’ by the path integral formulation, but that these paths are cancelled out.

This is false, and the reason for it is to introduce false mythology into physics. There is no evidence for it, there is no checkable prediction from it, and it is pseudoscience. It is a lie to claim that physics requires curved paths of particles to be included in path integrals. It doesn’t. See Feynman’s treatment of the refraction of light using graphical illustrations of path integrals (without any equations at all!) in his 1985 book QED: you don’t need wiggly curved paths to be included. All you need to include are straight line paths from light bulb to the water surface, and then after a discrete deflection at the water surface, another straight line path in the water to the receiver. The differing paths consist solely of straight lines with varying angles of deflection at the water surface! You don’t need to include any curved lines.
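The refraction picture described above, two straight segments joined by a single deflection at the water surface, can be sketched numerically. The code below is only an illustration under assumed values (source 1 unit above the surface, receiver 1 unit below, refractive index 1.33 for water): it searches over the crossing point for the path of least time and checks that this path satisfies Snell’s law. The function names are hypothetical.

```python
import math


def travel_time(x, h_air, h_water, d, n_air=1.0, n_water=1.33):
    """Travel time (in units where c = 1) for a two-segment straight-line path.

    The source is h_air above the surface, the receiver is h_water below it
    and a horizontal distance d away; the path crosses the surface at x.
    """
    return n_air * math.hypot(h_air, x) + n_water * math.hypot(h_water, d - x)


def least_time_crossing(h_air, h_water, d, n_air=1.0, n_water=1.33, iterations=200):
    """Ternary search for the crossing point that minimizes the travel time."""
    lo, hi = 0.0, d
    for _ in range(iterations):
        m1 = lo + (hi - lo) / 3.0
        m2 = hi - (hi - lo) / 3.0
        if travel_time(m1, h_air, h_water, d, n_air, n_water) < \
                travel_time(m2, h_air, h_water, d, n_air, n_water):
            hi = m2
        else:
            lo = m1
    return (lo + hi) / 2.0


def snell_products(x, h_air, h_water, d, n_air=1.0, n_water=1.33):
    """Return (n_air * sin(theta_air), n_water * sin(theta_water)) at crossing x."""
    sin_air = x / math.hypot(h_air, x)
    sin_water = (d - x) / math.hypot(h_water, d - x)
    return n_air * sin_air, n_water * sin_water


if __name__ == "__main__":
    x_best = least_time_crossing(1.0, 1.0, 2.0)
    print("crossing point:", x_best)
    print("Snell products:", snell_products(x_best, 1.0, 1.0, 2.0))
```

Only straight segments with different deflection angles are compared here, and the least-time member of that family is the one for which the two Snell products agree.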

Above: Professor Zee lies in Chapter I.2 of his book Quantum field theory in a nutshell (Princeton, 2003) that if the screen with two slits in the double-slit experiment has more and more holes drilled into it so that it eventually disappears altogether, you get chaotic path integrals because – so he falsely claims on page 9 – the photons will still diffract just as if they are going through small slits! Zee is so stupid that he ignores the whole mechanism for diffraction by a slit: the photon interacts with the electromagnetic fields of the electrons in the material along the edge of a slit, and is thus diffracted. When you remove the slits altogether, there are no edges left to cause photons to diffract, so contrary to Zee, photons don’t go loopy in empty space as if they are being diffracted by an infinite number of slits! Think about the refraction of light when entering glass: the electromagnetic fields in the photon interact with those of the electrons in the glass, and the result is a change in the velocity of light, causing refraction of light by glass. The edge of a slit has electrons in it, and the electromagnetic fields of those electrons interact with the nearby photon, causing it to diffract. Drill lots of holes and yes you get more complex interferences, but if you remove the material altogether you suddenly have no edges of slits left to cause diffraction, so the chaos disappears and things become simple!

Not only is he so gullible and mad that Zee ignores this obvious physical mechanism, but he falsely attributes his crank analysis to Feynman, who did not author it! (See Feynman’s book, QED, Princeton, 1985 for the facts Zee ignores!) Zee is just a liar and a fraudster: he is not just a charlatan but he draws a salary from teaching lies to people and he sells books with lies in them, which makes him a quack. Quack science often becomes mainstream: Hitler’s genocide was based on quack genetics, for example. So we need to catch these perpetrators and prosecute them for fraud, and convict them for willful deception for profit. Zee also makes some purely physical errors about particle spins, and promotes them with false propaganda. E.g., his path integral for quantum gravity presupposes spin-2 gravitons and then tries to justify this lie by excluding all the mass in the universe except for two small test masses. Obviously for just two masses, you would indeed need spin-2 graviton exchange to pull them together. But he does not state that if you include all the other masses in the universe (all carry gravitational charge, so there is no way to prevent them from exchanging gravitons with your two little test masses), you don’t need spin-2 gravitons anymore because you can predict gravity with spin-1 gravitons, allowing you to incorporate gravity into the revised Standard Model and have the final theory! But that is just a mistake by Zee, unlike his deception over what Feynman’s path integrals say about the double slit experiment, so it isn’t necessarily a fraud, just plain incompetence which suggests Zee should be sacked from his job for ignorance in the basics of physics. However, I’d like to see Witten kicked out of the Institute of Advanced Study in Princeton for his massive lie:

‘String theory has the remarkable property of predicting gravity.’ – Dr Edward Witten, M-theory originator, ‘Reflections on the Fate of Spacetime’, Physics Today, April 1996.

This lie damaged my chances of getting my discovery published in Classical and Quantum Gravity, so it has held back scientific progress. I know Witten would possibly argue that my work would have been rejected anyway, but there is such a thing as the straw that breaks the camel’s back; such lies don’t help physics.

In high energy physics above the IR cutoff you get pair production, as explained in detail in the previous post. This causes ‘loops’ in which bosonic field quanta knock pairs of virtual fermions free from the unobservable ground state of the vacuum/ether, which soon annihilate back into radiation again in a ‘loop’ of repeated virtual fermion creation and annihilation. Although it is convenient to depict this process by a circular loop on a spacetime Feynman diagram, even this situation (which is irrelevant below the 1 MeV IR cutoff for all low-energy physics anyway) is not physically composed of curved particle paths. All apparent cases of ‘curvature’ are merely a lot of straight lines joined up with particle interactions occurring at the vertices! Starlight deflected by the sun is deflected in a series of quantum graviton interactions in the vacuum, and the overall result can be statistically modelled to a good approximation by ‘curvature’ but such curvature remains just an approximation. There is no curved continuum spacetime, there is quantum spacetime. This is even clear when you look at the lies needed in general relativity: as soon as you introduce the properly quantized T_ab energy-momentum-stress tensor as the source of the gravitational field, the theory falls to pieces because the Ricci tensor only represents a continuously variable curved geodesic, not a straight line with discontinuities.

The whole of general relativity is just a classical approximation that usefully allows calculations to be made (albeit with a loss of physical intuition for the nature of the real world) incorporating the conservation of field energy into classical gravitation. It’s a lie to presume that general relativity, or any theory representing discontinuous fields as continuous variables in differential calculus, is a physically correct model. Such calculations are fairly complex approximations to the awesomely simple nature of the physical world, which doesn’t use the calculus.

With Feynman’s innovation, any problem in quantum mechanics generally can be evaluated by integrating the Dirac propagator over all path actions, thus instead of having to follow Born and add up the squares of the moduli of amplitudes for each path, we just instead add up a linear summation of exp(iS(n)/h-bar) terms, which is much easier and quicker (even a bright two year old can do it without making a mistake on a calculator). There is no mathematics beyond the trick of summing the amplitudes in such a way that they add up in a physically logical simple way without negative probabilities! For large numbers of paths, we can sum using calculus, by integrating exp(iS(n)/h-bar) for an infinite number n of possible geometric paths with differing actions S(n). (This integration may be mathematically hard, and may lead to infinities and problems in some cases, but that’s a human mathematical problem of using the calculus, it’s not a proof that nature is complex! Duh!) Just so that readers who don’t understand quantum field theory can see what we’re doing, S is action: action is the integral of the lagrangian over time, and the lagrangian for a free particle in a field is simply the difference between the kinetic energy, E = (1/2)mv^2 for non-relativistic situations, and the potential energy it has from the field it is immersed in. If a free massive particle has no potential energy and only kinetic energy, then the lagrangian is just the kinetic energy, (1/2)mv^2. Integrate that over time and the result is the action, S. The amplitude for the path integral just requires the action S and Planck’s constant, h. The bar through h (i.e. h-bar) signifies h divided by twice Pi, a result of the geometry of rotational symmetry. There’s absolutely no complex mathematics whatsoever, no stringiness whatsoever, within nature; instead it is beautifully simple and factual.

It’s really important for me to stress that Feynman was not, definitely not, merely trying to solve the problem of the infinite momenta of field quanta at close to the middle of an electron, and other quantum field theory problems with path integrals by imposing cutoffs for infrared and ultraviolet divergences (i.e. renormalization) in his theory. That is a lie, spread by liars in the mainstream who believe in extradimensional crap. Yes, Feynman did solve problems with renormalization, but what is being suppressed is that his innovation is not a mere abstract addition to the existing theory of quantum mechanics. It’s a revolution which replaces Bohring physics of multiverse speculations and other nonsense with facts, as you can see by reading the key paper by Feynman which was inevitably rejected by the Physical Review (see page 2 of 0004090v1; due to egotistical cranky ‘peer’ reviewers who worship false dogma and abhor factual physics, the Physical Review has regularly acted as a typical pseudoscience propaganda journal which believes that religious lobbying is a substitute for hard facts from experimental work on quantum gravity), before being published in Reviews of Modern Physics, vol. 20 (1948), p. 367:

‘This paper will describe what is essentially a third formulation of nonrelativistic quantum theory [Schroedinger’s wave equation and Heisenberg’s matrix mechanics being the first two attempts, which both generate nonsense ‘interpretations’]. This formulation was suggested by some of Dirac’s remarks concerning the relation of classical action to quantum mechanics. A probability amplitude is associated with an entire motion of a particle as a function of time, rather than simply with a position of the particle at a particular time.

‘The formulation is mathematically equivalent to the more usual formulations. … there are problems for which the new point of view offers a distinct advantage. …’

Wow, what an understatement! I’m not alone in supporting Feynman’s case against the crackpot, backward mainstream which is still stuck in 1927 with obsolete physics and hasn’t grasped path integrals at all. E.g., Richard MacKenzie clearly supports what I’m saying about Feynman where he writes in his paper Path Integral Methods and Applications, pages 2-13:

‘… I believe that path integrals would be a very worthwhile contribution to our understanding of quantum mechanics. Firstly, they provide a physically extremely appealing and intuitive way of viewing quantum mechanics: anyone who can understand Young’s double slit experiment in optics should be able to understand the underlying ideas behind path integrals. Secondly, the classical limit of quantum mechanics can be understood in a particularly clean way via path integrals. … for fixed h-bar, paths near the classical path will on average interfere constructively (small phase difference) whereas for random paths the interference will be on average destructive. … we conclude that if the problem is classical (action >> h-bar), the most important contribution to the path integral comes from the region around the path which extremizes the path integral. In other words, the particle’s motion is governed by the principle that the action is stationary. This, of course, is none other than the Principle of Least Action from which the Euler-Lagrange equations of classical mechanics are derived.’
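To make the “stationary action” statement concrete, here is a minimal sketch for a free particle whose path consists of two straight segments with one deflection at the half-way time, in keeping with the straight-line picture used in this post. The setup (unit mass, a kink at exactly half the total time) and the function names are assumptions made for illustration.

```python
def kinked_path_action(x_mid, x_end, total_time, mass=1.0):
    """Action of a free particle travelling 0 -> x_mid -> x_end.

    The path is two straight segments of equal duration, so the lagrangian
    on each segment is just the constant kinetic energy (1/2) m v^2.
    """
    half = total_time / 2.0
    v1 = x_mid / half
    v2 = (x_end - x_mid) / half
    return 0.5 * mass * v1 ** 2 * half + 0.5 * mass * v2 ** 2 * half


def classical_action(x_end, total_time, mass=1.0):
    """Action of the undeflected straight path: m * x_end^2 / (2 * T)."""
    return 0.5 * mass * x_end ** 2 / total_time


if __name__ == "__main__":
    x_end, total_time = 2.0, 1.0
    for x_mid in [0.0, 0.5, 0.9, 1.0, 1.1, 1.5, 2.0]:
        print(x_mid, kinked_path_action(x_mid, x_end, total_time))
    print("classical:", classical_action(x_end, total_time))
```

The action is smallest for the undeflected path (x_mid = x_end / 2) and grows quadratically with the size of the kink, so paths close to the classical one have nearly the same action and hence nearly the same phase.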

So far so good, but I must point out that MacKenzie goes on to make a terrible error in his analysis of the Aharonov-Bohm effect, where a shielded box containing a magnetic field is placed between the two slits in the double slit experiment, and the photon interference pattern is affected by the magnetic field in the box. (This experiment was first done by Chambers in 1960.) The fatal mainstream error MacKenzie makes is the implicit assumption that the ‘shield’ which eliminates the observable magnetic field actually stops that magnetic field instead of merely cancelling it by superposition! Magnetic fields work by polarization. Little magnets such as fundamental spinning charges align against an external field in such a way as to oppose and partially ‘cancel’ that field: but this cancellation is a superposition of two fields, not the elimination of a field. Think simply: if you put a child on each end of a see-saw, it may balance, but that doesn’t mean you have cancelled out all the forces. You have only cancelled out some of the forces: you have ensured that the forces balance but there is still a force on the fulcrum that isn’t ‘cancelled out’. Similarly, if you have $1000 credit in one bank account and a debt of $1000 in another, you aren’t free from debt unless you transfer the money across.

What happens with magnetic fields is that any material is full of magnetic fields because all fundamental particles have electric charge and spin, but normally the random orientations or the paired up spins (adjacent electrons in an atom are paired with opposite spins under the Pauli exclusion principle) mean that the magnetism cancels out. Only when you have an asymmetry, aligning more of the spins one way than the opposite way, do you see the magnetic field. In the absence of alignment, the fields cancel by superposition, but the energy is still there in the field (energy is conserved). Therefore, in the Aharonov-Bohm effect, the influence of the ‘shielded’ magnetic field on the interference pattern isn’t ‘magical’ or unexpected. The magnetic fields of the photon are affected by the energy density of the ‘cancelled’ magnetic fields, just as light slows down in a block of glass due to the energy density of the electromagnetic fields from the charged matter making up glass!

All of the mainstream ‘physicists’ (quacks) I’ve spoken to believe wrongly that because an ‘uncharged’ block of glass contains as many protons as electrons and hence has a net electric charge of zero, the electric fields ‘don’t exist’ anymore there, just as they claim the magnetic fields ‘don’t exist’ in the Aharonov-Bohm effect. They are so far gone into mystical eastern entanglement quackery that they just ignore anomalies and become abusive when disproved time after time, and of course they get still more and more angry when you predict gravity factually and all the related predictions from the corrected physics. They are all totally insane, they are bad losers, they hate real physics, they hate the way the world really is!

This is essential to the checkable aspects of quantum gravity, i.e., low energy quantum gravity stuff like predicting the gravity force coupling parameter G, because at low energy graviton fields will carry a very low energy density (gravity is 10^40 times weaker than electromagnetism at low energy, everyday physics). Therefore, at low energy, we can ignore the effects of graviton emission from the energy of the gravitational fields (because they are so weak at low energy) which ensures that the path integrals for quantum gravity will be similar to those of electromagnetism for low energy physics, where the checkable predictions of quantum gravity will be found. Who – apart from nutty string theorists – cares about the uncheckable speculations of Planck scale quantum gravity? If we first get a quantum gravity theory that makes correct checkable predictions at low energy, then we will be in a position to make confident extrapolations from that particular theory to higher energies. We can’t have that confidence if we start with speculations of high energy that can’t be checked! Duh! Get a grip on reality, all you string theorists and fellow-travellers in the media!
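The rough size of the “10^40” figure can be checked with a few lines of arithmetic. The sketch below compares the Coulomb and Newtonian forces between two particles, using rounded values of the standard physical constants; the exact ratio depends on which pair of particles is assumed, so the round figure should be read as an order of magnitude. The function name is hypothetical.

```python
# Rounded standard values of the physical constants (SI units).
COULOMB_CONSTANT = 8.9875517923e9      # N m^2 / C^2
GRAVITATIONAL_CONSTANT = 6.67430e-11   # N m^2 / kg^2
ELEMENTARY_CHARGE = 1.602176634e-19    # C
ELECTRON_MASS = 9.1093837015e-31       # kg
PROTON_MASS = 1.67262192369e-27        # kg


def coulomb_to_gravity_ratio(mass_1, mass_2, charge=ELEMENTARY_CHARGE):
    """Ratio of electric to gravitational force between two unit charges.

    Both forces fall off as 1/r^2, so the separation cancels out.
    """
    electric = COULOMB_CONSTANT * charge ** 2
    gravitational = GRAVITATIONAL_CONSTANT * mass_1 * mass_2
    return electric / gravitational


if __name__ == "__main__":
    print("electron-proton:", coulomb_to_gravity_ratio(ELECTRON_MASS, PROTON_MASS))
    print("electron-electron:", coulomb_to_gravity_ratio(ELECTRON_MASS, ELECTRON_MASS))
```

For an electron and a proton the ratio comes out a little above 10^39, and for two electrons a little above 10^42, which brackets the round figure quoted above.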

This makes quantum gravity path integrals very simple for low energy, like electromagnetism. So let’s deal with electromagnetism first, then move on to quantum gravity.

Feynman explains that all light sources radiate photons in all directions, along all paths, but most of those cancel out due to destructive interference. If you throw a stone at an apple, the apple won’t move significantly if someone on the other side of the apple does the same thing with a similar stone! The two impacts will cancel out, apart from a compression of the apple! In other words, there are natural situations where exchange radiation causes destructive interference, and the nature of light is exactly this situation.

The amplitudes of the paths near the classical path reinforce each other because their phase factors are nearly equal. The phase factor, representing the relative amplitude of a particular path, is exp(-iHT) = exp(iS), where H is the Hamiltonian (kinetic energy in the case of a free particle) and S is the action for the particular path measured in quantum action units of h-bar (action S is the integral of the Lagrangian over time for a given path).

Because you have to integrate the phase factor exp(iS) over all paths to obtain the resultant overall amplitude, clearly radiation is being exchanged over all paths, but is being cancelled over most of the paths somehow. The phase factor equation models this as interferences without saying physically what process causes the interferences.
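The cancellation over most paths can be seen in a toy summation. This is a sketch under stated assumptions, not a model of any real system: each path is labelled by a single deflection parameter u, and its action (in units of h-bar) is assumed to be the quadratic S(u) = curvature × u^2, with the classical path at u = 0. The function name and parameter values are hypothetical.

```python
import cmath


def average_arrow_length(center, width=2.0, n_paths=2001, curvature=5.0):
    """Length of the averaged sum of exp(iS) over a window of paths.

    Paths are labelled by u in [center - width/2, center + width/2] and the
    assumed action is S(u) = curvature * u**2 in units of h-bar.
    """
    total = 0j
    for j in range(n_paths):
        u = center - width / 2.0 + width * j / (n_paths - 1)
        total += cmath.exp(1j * curvature * u * u)
    return abs(total) / n_paths


if __name__ == "__main__":
    print("window around the classical path:", average_arrow_length(0.0))
    print("window far from the classical path:", average_arrow_length(10.0))
```

The window centred on the classical path leaves a substantial resultant arrow, while an equally wide window far from it sums to almost nothing because neighbouring phases there rotate rapidly and cancel.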

Thus, in Feynman’s path integral explanation in his 1985 book QED, an electron when it radiates actually sends out radiation in all directions, along all possible paths, but most of this gets cancelled out because all of the other electrons in the universe around it are doing the same thing, so the radiation just gets exchanged, cancelling out in ‘real’ photon effects. (The electron doesn’t lose energy, because it gains as much by receiving such virtual radiation as it emits, so there is equilibrium). Any “real” photon accompanying this exchange of unobservable (virtual) radiation is then represented by a small core of uncancelled paths, where the phase factors tend to add together instead of cancelling out.

All electrons have centripetal acceleration from spin and so are always radiating, so there is an equilibrium of emission and reception established in the universe, called exchange radiation/vector bosons/gauge bosons, which can only be ‘seen’ via force fields they produce; ‘real’ radiation simply occurs when the normally invisible exchange equilibrium gets temporarily upset by the acceleration of a charge.

A conspiracy of mainstream string worshipping physics quacks claims that quantum entanglement exists and that the universe can’t be described in terms of Feynman’s simplicity, but this is a lie as exposed by the following facts:

Editorial policy of the American Physical Society journals (including PRL and PRA):

From: Physical Review A [mailto:pra@aps.org]

Sent: 19 February 2004 19:47

To: ch.thompson1@virgin.net

Subject: To_author AG9055 Thompson

Re: AG9055

Dear Dr. Thompson,

… With regard to local realism, our current policy is summarized succinctly, albeit a bit bluntly, by the following statement from one of our Board members:

“In 1964, John Bell proved that local realistic theories led to an upper bound on correlations between distant events (Bell’s inequality) and that quantum mechanics had predictions that violated that inequality. Ten years later, experimenters started to test in the laboratory the violation of Bell’s inequality (or similar predictions of local realism). No experiment is perfect, and various authors invented ‘loopholes’ such that the experiments were still compatible with local realism. Of course nobody proposed a local realistic theory that would reproduce quantitative predictions of quantum theory (energy levels, transition rates, etc.). This loophole hunting has no interest whatsoever in physics.” …’

‘In some key Bell experiments, including two of the well-known ones by Alain Aspect, 1981-2, it is only after the subtraction of ‘accidentals’ from the coincidence counts that we get violations of Bell tests. The data adjustment, producing increases of up to 60% in the test statistics, has never been adequately justified. Few published experiments give sufficient information for the reader to make a fair assessment. There is a straightforward and well known realist model that fits the unadjusted data very well. In this paper, the logic of this realist model and the reasoning used by experimenters in justification of the data adjustment are discussed. It is concluded that the evidence from all Bell experiments is in urgent need of re-assessment, in the light of all the known ‘loopholes’. Invalid Bell tests have frequently been used, neglecting improved ones derived by Clauser and Horne in 1974. ‘Local causal’ explanations for the observations have been wrongfully neglected.’

After Dr Thompson’s tragic death from cancer in 2006, her website was preserved; there she had written in defiance of the Physical Review editor:

http://freespace.virgin.net/ch.thompson1/EPR_Progress.htm:

‘The story, as you may have realised, is that there is no evidence for any quantum weirdness: quantum entanglement of separated particles just does not happen. This means that the theoretical basis for quantum computing and encryption is null and void. It does not necessarily follow that the research being done under this heading is entirely worthless, but it does mean that the funding for it is being received under false pretences. It is not surprising that the recipients of that funding are on the defensive. I’m afraid they need to find another way to justify their work, and they have not yet picked up the various hints I have tried to give them. There are interesting correlations that they can use. It just happens that they are ordinary ones, not quantum ones, better described using variations of classical theory than quantum optics.

‘Why do I seem to be almost alone telling this tale? There are in fact many others who know the same basic facts about those Bell test loopholes, though perhaps very few who have even tried to understand the real correlations that are at work in the PDC experiments. I am almost alone because, I strongly suspect, nobody employed in the establishment dares openly to challenge entanglement, for fear of damaging not only his own career but those of his friends.’

The stringy mainstream still ignores Feynman’s path integrals as being a reformulation of QM (a third option), seeing them instead as QFT: Feynman’s paper ‘Space-Time Approach to Non-Relativistic Quantum Mechanics’, Reviews of Modern Physics, volume 20, page 367 (1948), makes it clear that his path integrals are a reformulation of quantum mechanics which gets rid of the uncertainty principle and all the pseudoscience it brings with it.

Richard P. Feynman, QED, Penguin, 1990, pp. 55-6, and 84:

‘I would like to put the uncertainty principle in its historical place: when the revolutionary ideas of quantum physics were first coming out, people still tried to understand them in terms of old-fashioned ideas … But at a certain point the old fashioned ideas would begin to fail, so a warning was developed that said, in effect, “Your old-fashioned ideas are no damn good when …”. If you get rid of all the old-fashioned ideas and instead use the ideas that I’m explaining in these lectures – adding arrows [arrows = path phase amplitudes in the path integral, i.e. exp(iS(n)/h-bar)] for all the ways an event can happen – there is no need for an uncertainty principle! … on a small scale, such as inside an atom, the space is so small that there is no main path, no “orbit”; there are all sorts of ways the electron could go, each with an amplitude. The phenomenon of interference [by field quanta] becomes very important …’

So classical and quantum field theories differ due to the physical exchange of field quanta between charges. This exchange of discrete virtual quanta causes chaotic interferences to individual fundamental charges in strong force fields. Field quanta induce Brownian-type motion of individual electrons inside atoms, but this does not arise for very large charges (many electrons in a big, macroscopic object), because statistically the virtual field quanta avert randomness in such cases by averaging out. If the average rate of exchange of field quanta is N quanta per second, then the random standard deviation of the number exchanged each second is 100/N^(1/2) percent. Hence the statistics prove that the bigger the rate of field quanta exchange, the smaller the amount of chaotic variation. For large numbers of field quanta resulting in forces over long distances and for large charges like charged metal spheres in a laboratory, the rate at which charges exchange field quanta with one another is so high that the Brownian motion resulting to individual electrons from chaotic exchange gets statistically cancelled out, so we see a smooth net force and classical physics is accurate to an extremely good approximation.
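The 100/N^(1/2) percent scatter can be checked with a short simulation. This is only a sketch: it assumes that the exchanges arrive independently at random (Poisson statistics), which is an assumption made for illustration, and the function names are hypothetical.

```python
import math
import random
import statistics

def predicted_percent_sd(n_mean):
    """Predicted relative standard deviation, 100/sqrt(N) percent."""
    return 100.0 / math.sqrt(n_mean)

def simulated_percent_sd(n_mean, trials=400, seed=1):
    """Count independent random arrivals in unit time (assumed Poisson)
    and measure the percentage scatter of the counts between trials."""
    rng = random.Random(seed)
    counts = []
    for _ in range(trials):
        t, count = 0.0, 0
        while True:
            t += rng.expovariate(n_mean)  # waiting time to the next quantum
            if t > 1.0:
                break
            count += 1
        counts.append(count)
    return 100.0 * statistics.pstdev(counts) / statistics.mean(counts)

if __name__ == "__main__":
    for n in (10, 100, 1000):
        print(n, round(predicted_percent_sd(n), 2), round(simulated_percent_sd(n), 2))
```

Raising N by a factor of 100 should cut the percentage scatter by about a factor of 10, which is the averaging-out effect described above.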

Thus, chaos on small scales has a provably beautiful simple physical mechanism and mathematical model behind it: path integrals with phase amplitudes for every path. This is analogous to the Brownian motion of individual 500 m/sec air molecules striking dust particles which creates chaotic motion due to the randomness of air pressure on small scales, while a ship with a large sail is blown steadily by averaging out the chaotic impacts of immense numbers of air molecule impacts per second. So nature is extremely simple: there is no evidence for the mainstream ‘uncertainty principle’-based metaphysical selection of parallel universes upon wavefunction collapse. (Stringers love metaphysics.) Dr Thomas Love, who writes comments at Dr Woit’s Not Even Wrong blog sometimes, kindly emailed me a preprint explaining:

‘The quantum collapse [in the mainstream interpretation of quantum mechanics, where a wavefunction collapse occurs whenever a measurement of a particle is made] occurs when we model the wave moving according to Schroedinger (time-dependent) and then, suddenly at the time of interaction we require it to be in an eigenstate and hence to also be a solution of Schroedinger (time-independent). The collapse of the wave function is due to a discontinuity in the equations used to model the physics, it is not inherent in the physics.’

‘… nature has a simplicity and therefore a great beauty.’

– Richard P. Feynman (The Character of Physical law, p. 173)

The double slit experiment, Feynman explains, proves that light uses a small core of space where the phase amplitudes for paths add together instead of cancelling out, so if that core overlaps two nearby slits the photon diffracts through both the slits:

‘Light … uses a small core of nearby space. (In the same way, a mirror has to have enough size to reflect normally: if the mirror is too small for the core of nearby paths, the light scatters in many directions, no matter where you put the mirror.)’

– R. P. Feynman, QED, Penguin, 1990, page 54.

Hence nature is very simple, with no need for the wavefunction collapse or the ‘multiverse’ lie of crackpot Hugh Everett III who wouldn’t even incorporate the physical dynamics of fallout particle sizes and deposition phenomena in his purely statistical paper allegedly predicting fallout casualties:

‘It always bothers me that, according to the laws as we understand them today, it takes a computing machine an infinite number of logical operations to figure out what goes on in no matter how tiny a region of space, and no matter how tiny a region of time. How can all that be going on in that tiny space? Why should it take an infinite amount of logic to figure out what one tiny piece of spacetime is going to do? So I have often made the hypothesis that ultimately physics will not require a mathematical statement, that in the end the machinery will be revealed, and the laws will turn out to be simple, like the chequer board with all its apparent complexities.’

– R. P. Feynman, The Character of Physical Law, November 1964 Cornell Lectures, broadcast and published in 1965 by BBC, pp. 57-8.

Path integrals for fundamental forces in quantum field theory

Richard P. Feynman’s paper ‘Space-Time Approach to Non-Relativistic Quantum Mechanics’, Reviews of Modern Physics, volume 20, page 367 (1948), despite being rejected previously by the Physical Review, is an essential piece of reading. Feynman makes it clear that his path integrals are a reformulation of quantum mechanics, not merely an extension to sweep away infinities in quantum field theory!

Feynman’s model treats the statistical randomness of particle physics by summing all possible paths a particle can take in any interaction (real particles as well as virtual particles such as force-mediating gauge bosons) while assigning a weighting (amplitude) to each path by means of a phase amplitude which can vary for different paths: paths lying near the classical path reinforce automatically while those far from the classical path suffer interference and cancellation.
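A toy numerical illustration of this reinforcement and cancellation is sketched below. It assumes a free particle in units where h-bar = 1, and it uses a hypothetical one-parameter family of paths: two straight segments from (0, 0) to (X, T) whose midpoint is displaced by a distance a from the classical straight line. For this family the action works out to the classical action plus 2ma^2/T.

```python
import cmath

def action(a, m=1.0, X=1.0, T=1.0):
    """Free-particle action (units of h-bar) for a two-segment path from (0, 0)
    to (X, T) whose midpoint is displaced by a from the straight line."""
    half = T / 2.0
    v1 = (X / 2.0 + a) / half  # speed on the first segment
    v2 = (X / 2.0 - a) / half  # speed on the second segment
    return 0.5 * m * v1**2 * half + 0.5 * m * v2**2 * half

def mean_arrow(a_min, a_max, n=2001):
    """Average of exp(iS) over n evenly spaced paths with a in [a_min, a_max]."""
    step = (a_max - a_min) / (n - 1)
    total = sum(cmath.exp(1j * action(a_min + k * step)) for k in range(n))
    return total / n

near = abs(mean_arrow(-0.5, 0.5))  # paths close to the classical path
far = abs(mean_arrow(5.0, 6.0))    # equally wide bundle far from it
print(near, far)
```

The bundle of paths near a = 0 gives an average arrow of nearly unit length, because the phases barely change across it, while the equally wide bundle far from the classical path winds through several full cycles of phase and largely cancels.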

‘Light … ‘smells’ the neighboring paths around it, and uses a small core of nearby space. (In the same way, a mirror has to have enough size to reflect normally: if the mirror is too small for the core of nearby paths, the light scatters in many directions, no matter where you put the mirror.)’ – R. P. Feynman, QED, Penguin, 1990, page 54.

‘When we look at photons on a large scale … there are enough paths around the path of minimum time to reinforce each other, and enough other paths to cancel each other out. But when the space through which a photon moves becomes too small (such as the tiny holes in the screen), these [classical] rules fail – we discover that light doesn’t have to go in straight lines, there are interferences created by two holes … The same situation exists with electrons: when seen on a large scale, they travel like particles, on definite paths. But on a small scale, such as inside an atom, the space is so small that there is no main path, no ‘orbit’; there are all sorts of ways the electron could go, each with an amplitude. The phenomenon of interference [on small distance scales due to individual field quanta interactions not occurring in sufficiently large numbers to cancel out random chaos, and due to interactions with the pair-production of virtual fermions in the very strong electric fields on small distance scales, where the fields exceed the 1.3*10^18 V/m Schwinger threshold electric field strength for pair-production] becomes very important, and we have to sum the arrows to predict where an electron is likely to be.’

– R. P. Feynman, QED, Penguin, 1990, pp. 84-5.

‘… the ‘inexorable laws of physics’ … were never really there … Newton could not predict the behaviour of three balls … In retrospect we can see that the determinism of pre-quantum physics kept itself from ideological bankruptcy only by keeping the three balls of the pawnbroker apart.’

– Dr Tim Poston and Dr Ian Stewart, ‘Rubber Sheet Physics’, Analog: Science Fiction/Science Fact, Vol. C1, No. 129, Davis Publications, New York, November 1981.

Feynman’s point is that Heisenberg’s uncertainty principle arises from interference between paths a particle can take when virtual particles affect the motion of real particles, and this is very important:

‘I would like to put the uncertainty principle in its historical place: when the revolutionary ideas of quantum physics were first coming out, people still tried to understand them in terms of old-fashioned ideas … But at a certain point the old fashioned ideas would begin to fail, so a warning was developed that said, in effect, “Your old-fashioned ideas are no damn good when …” If you get rid of all the old-fashioned ideas and instead use the ideas that I’m explaining in these lectures – adding arrows [phase amplitudes] for all the ways an event can happen – there is no need for an uncertainty principle!’

– Richard P. Feynman, QED, Penguin, 1990, pp. 55-6.

The uncertainty principle of quantum mechanics itself arises because of interference due to virtual particles.

Feynman is simply adopting Popper’s explanation of the uncertainty principle in this case:

‘… the Heisenberg formulae can be most naturally interpreted as statistical scatter relations [between virtual particles in the quantum foam vacuum and real electrons, etc.], as I proposed [in the 1934 book The Logic of Scientific Discovery]. … There is, therefore, no reason whatever to accept either Heisenberg’s or Bohr’s subjectivist interpretation …’

– Sir Karl R. Popper, Objective Knowledge, Oxford University Press, 1979, p. 303.

(Popper in 1982 added: ‘… the view of the status of quantum mechanics which Bohr and Heisenberg defended – was, quite simply, that quantum mechanics was the last, the final, the never-to-be-surpassed revolution in physics … physics has reached the end of the road.’ – Sir Karl Popper, Quantum Theory and the Schism in Physics, Rowman and Littlefield, NJ, 1982, p. 6. Bohr had been condemned in a letter he received from Rutherford about the Bohr atom, which asked Bohr to explain why the spinning and orbiting electron didn’t radiate and spiral into the nucleus! The true explanation is that in the ground state, the electron receives as much energy from the quantum field vacuum as it radiates, but Bohr didn’t know that so he invented a metaphysical ‘correspondence principle’ and a metaphysical ‘complementarity principle’ which together formed the basis for the 1927 Solvay ‘Copenhagen Interpretation’ of quantum mechanics, which denies that further progress in quantum mechanics is possible, even in principle. Heisenberg sided with Bohr at Solvay in 1927 because his own ‘uncertainty principle’-based matrix mathematics version of quantum mechanics was also physically empty at that time.)

If you subtracted all virtual particle effects from quantum field theory, nothing would be left of physics because all forces are caused by the exchange of virtual particles between real particles. It is because this exchange is chaotic in nature that the Coulomb law is chaotic when dealing with individual charges like individual electrons in an atom. When dealing with large numbers of electrons, the chaotic randomness of individual field quanta exchanges disappears because statistically, with increasingly large numbers of interactions, the situation becomes ever less chaotic and ever more classical in nature. An analogy to this is Brownian motion of small dust particles due to individual impacts from air molecules. Such small dust particles move around chaotically, but larger objects like a ship’s sail experience such a large flux of air molecule interactions that the chaos cancels out and the sail is subject to effectively steadier pressure.

In his lecture ‘This Unscientific Age’, which Feynman gave as part of a series of three lectures under the collective title ‘The Meaning of It All’ in April of 1963 at the University of Washington, he used heavy sarcasm when he rejected outright the concept that probabilities have any physical significance by themselves (probabilities merely quantify the ignorance of human observers):

‘For example, I had the most remarkable experience this evening. While coming in here, I saw licence plate ANZ 912. Calculate for me, please, the odds that of all the licence plates in the state of Washington I should happen to see ANZ 912.’ – Richard P. Feynman, The Meaning of It All, Penguin, London, 1998, p. 81.

Feynman goes on to stress that probabilities are fiction after the event, when we know whether the event occurred or not. The whole concept of probability has nothing to do with reality itself, and is merely an attempt to quantify the ignorance of reality held by some observer. He gives an example in the lecture of a scientist who noticed that a rat took alternate left and right turns while running through a maze and asked Feynman to calculate the remarkably low probability of the event. Feynman replied: ‘… it doesn’t make sense to calculate after the event … you selected the peculiar case. … do another experiment all over again and then see if they alternate. He did, and it didn’t work.’ But people (not just scientists) generally abuse probability theory by calculating pseudo-probabilities after an event has occurred!

Something happens and people like to say it is a one-in-a-million chance, and must therefore have a deep spiritual or metaphysical meaning. In reality, you can calculate such low probabilities for any mundane event, like seeing a particular random plate out of millions at random. Who cares that when you look for number plates and see one, there is just one in a million or less chance that you could have predicted which one you would see in advance? Even when there is statistical evidence that some chance event shows a real correlation between two different things, that doesn’t prove any obvious link between those two things: the classic example is in the book How to Lie with Statistics which reports a definite correlation between the number of storks nests on house roofs in Holland and the number of children in the family of the householder! (This correlation was true, but it didn’t prove the myth that storks deliver babies to families: bigger families simply tended to buy big old houses, which because of their size and age on average had more storks nests on the roof than the typically smaller, newer houses of families with fewer children!)

‘The quantum collapse [in the mainstream interpretation of quantum mechanics, which has wavefunction collapse occur when a measurement is made] occurs when we model the wave moving according to Schroedinger (time-dependent) and then, suddenly at the time of interaction we require it to be in an eigenstate and hence to also be a solution of Schroedinger (time-independent). The collapse of the wave function is due to a discontinuity in the equations used to model the physics, it is not inherent in the physics.’

– Dr Thomas Love, Departments of Physics and Mathematics, California State University, ‘Towards an Einsteinian Quantum Theory’, preprint emailed to me.

Dr Love points out that the mainstream ‘wavefunction collapse’ Copenhagen interpretation (and all entanglement interpretations) are speculative. He points out that the wavefunction doesn’t physically collapse. There are two mathematical models, the time-dependent Schroedinger equation and the time-independent Schroedinger equation.

Taking a measurement means that, in effect, you switch between which equations you are using to model the electron. It is the switch over in mathematical models which creates the discontinuity in your knowledge. When you take a measurement on the electron’s spin state, for example, the electron is not in a superposition of two spin states before the measurement. (You merely have to assume that each possibility is a valid probabilistic interpretation, before you take a measurement to check.)

Suppose someone flips a coin and sees which side is up when it lands, but doesn’t tell you. You have to assume that the coin is 50% likely heads up, and 50% likely to be tails up. So, to you, it is like the electron’s spin before you measure it. When the person shows you the coin, you see what state the coin is really in. This changes your knowledge from a superposition of two equally likely possibilities, to reality.

Dr Love states on page 9 of his preprint paper Towards an Einsteinian Quantum Theory: ‘The problem is that quantum mechanics is mathematically inconsistent…’, and compares the two versions of the Schroedinger equation on page 10. The time independent and time-dependent versions disagree and this disagreement nullifies the principle of superposition and consequently the concept of wavefunction collapse being precipitated by the act of making a measurement. The failure of superposition discredits the usual interpretation of the EPR experiment as proving quantum entanglement. In fact, making a measurement always interferes with the system being measured (by recoil from firing light photons or other probes at the object), but that is not justification for the metaphysical belief in wavefunction collapse.

This path integrals formulation is a new theory of quantum mechanics, being an alternative to the mainstream Schroedinger wave description and Heisenberg’s abstract matrix mechanics. Feynman writes in his 1948 paper:

‘In quantum mechanics the probability of an event which can happen in several different ways is the absolute square of a sum of complex contributions, one from each alternative way. The probability that a particle will be found to have a path x(t) lying somewhere within a region of space time is the square of a sum of contributions, one from each path in the region. The contribution from a single path is postulated to be an exponential whose (imaginary) phase is the classical action (in units of h-bar) for the path in question. The total contribution from all paths reaching x, t from the past is the wave function {Psi}(x, t). …

‘It is a curious historical fact that modern quantum mechanics began with two quite different mathematical formulations: the differential equation of Schroedinger, and the matrix algebra of Heisenberg. The two, apparently dissimilar approaches, were proved to be mathematically equivalent. …

‘This paper will describe what is essentially a third formulation of nonrelativistic quantum theory. This formulation was suggested by some of Dirac’s remarks concerning the relation of classical action to quantum mechanics. A probability amplitude is associated with an entire motion of a particle as a function of time, rather than simply with a position of the particle at a particular time.

‘The formulation is mathematically equivalent to the more usual formulations. There are, therefore, no fundamentally new results. However, there is a pleasure in recognizing old things from a new point of view. Also, there are problems for which the new point of view offers a distinct advantage. …’

Feynman goes on in the paper to obtain his theory from just two postulates, the first being Born’s familiar rule from quantum mechanics (i.e., that the probability of an event is proportional to the square of the wavefunction amplitude), while the second postulate tells you how to calculate the wavefunction contribution from each path:

‘I. If an ideal measurement is performed, to determine whether a particle has a path lying in a region of space-time, then the probability that the result will be affirmative is the absolute square of a sum of complex contributions, one from each path in the region. …

‘II. The paths contribute equally in magnitude, but the phase of their contribution is the classical action (in units of h-bar); i.e., the time integral of the Lagrangian taken along the path.’
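As a minimal worked example of the two postulates, take the simplest hypothetical case of just two paths (for instance one through each of two slits), with actions S_1 and S_2. Each path contributes an arrow of equal length (postulate II), and the probability is the absolute square of the sum (postulate I):

```latex
P \;\propto\; \left| e^{iS_1/\hbar} + e^{iS_2/\hbar} \right|^2
  = 2 + 2\cos\!\left(\frac{S_1 - S_2}{\hbar}\right)
```

The result swings between 4 (arrows aligned) and 0 (arrows opposed) as the action difference changes, which is the interference pattern.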

Feynman states in a footnote:

‘Throughout this paper the term “action” will be used for the time integral of the Lagrangian along a path. When this path is the one actually taken by a particle, moving classically, the integral should more properly be called Hamilton’s first principle function.’

Above: the path integral performed for the Yukawa field, the simplest system in which the exchange of massive virtual pions between two nucleons causes an attractive force. Virtual pions will exist all around nucleons out to a short range, and if two nucleons get close enough for their virtual pion fields to overlap, they will be attracted together. This is a little like Lesage’s idea where massive particles push charges together over a short range (the range being limited by the diffusion of the massive particles into the ‘shadowing’ regions). (See page 26 of Zee for discussion, and page 29 for integration technique. But we will discuss the components of this and other path integrals in detail below.) Zee comments on the result above on page 26: “This energy is negative! The presence of two … sources, at x1 and x2, has lowered the energy. In other words, two sources attract each other by virtue of their coupling to the field … We have derived our first physical result in quantum field theory.” This 1935 Yukawa theory explains the strong nuclear attraction between nucleons in a nucleus by predicting that the exchange of pions (discovered later with the mass Yukawa predicted) would overcome the electrostatic repulsion between the protons, which would otherwise blow the nucleus apart.

But the way the mathematical Yukawa theory has been generalized to electromagnetism and gravity is the basic flaw in today’s quantum field theory: to analyze the force between two charges, located at positions in space x1 and x2, the path integral is done including only those two charges, and just ignoring the vast number of charges in the rest of the universe which – for infinite range inverse-square law forces – are also exchanging virtual gauge bosons with x1 and x2!

A potential energy which varies inversely with distance implies a force which varies as the inverse-square law of distance, because work energy W = Fr, where force F acts over distance r, hence F = W/r (more precisely, F = -dW/dr), and since energy W is inversely proportional to r, we get F ~ 1/r^2. (Distances x in the integrand result in the radial distance r in the result for potential energy above.) In the case of gravity and electromagnetism, the effective mass of the gauge boson in this equation m ~ 0, which gets rid of the exponential term (spin-2 gravitons are supposed to have mass to enable graviton-graviton interactions to enhance the strength of the graviton interaction at high energy in strong fields enough to “unify” the strength of gravity with standard model forces near the Planck scale – an assumption about “unification” which is physically in error (see Figures 1 and 2 in the blog post https://nige.wordpress.com/2007/07/17/energy-conservation-in-the-standard-model/) – and additionally, we’ve shown why spin-2 gravitons are based on error and anyway in the standard model all mass arises from an external vacuum “Higgs” field and is not intrinsic). The exponential term is however important in the short-range weak and strong interactions. Weak gauge bosons are supposed to get their mass from some vacuum (Higgs) field, while massless gluons cause a pion-mediated attraction of nucleons, where the pions have mass so the effective field theory for nuclear physics is of the Yukawa sort.
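The step from potential energy to force can be written out explicitly. The sketch below assumes the signed Yukawa energy in the form Zee quotes, in units where h-bar = c = 1 so that m is an inverse length, and takes the force as minus the radial derivative of the energy:

```latex
U(r) = -\frac{e^{-mr}}{4\pi r},
\qquad
F(r) = -\frac{dU}{dr} = -\frac{(1 + mr)\,e^{-mr}}{4\pi r^{2}}
\;\xrightarrow{\;m \to 0\;}\; -\frac{1}{4\pi r^{2}}
```

The negative sign indicates attraction, and setting m = 0 removes both the exponential and the (1 + mr) factor, leaving the pure inverse-square law.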

A path integral calculates the amplitude for an interaction which can occur by a variety of different possible paths through spacetime. The numerator of the path integral integrand above is derived from the phase factor representing the relative amplitude of a particular path, which is simply the exponential term exp(-iHT) = exp(iS), where H is the Hamiltonian (which for a free particle of mass m simply represents the kinetic energy of the particle, H = E = p^2/(2m) = (1/2)mv^2; in the event of there being a force field present, the Hamiltonian adds the potential energy V due to the force field to the kinetic energy, H = p^2/(2m) + V, whereas the Lagrangian subtracts it, L = p^2/(2m) – V), T is time, and S is the action for the particular path measured in quantum action units of h-bar (the action S is the integral of the Lagrangian over time for a particular path, S = ∫L dt).

The origin of the phase factor for the amplitude of a particular path, exp(-iHT), is simply the time-dependent Schroedinger equation of quantum mechanics: i{h-bar}d{Psi}/dt = H{Psi}, where H is the Hamiltonian (energy operator). Solving this gives wavefunction amplitude, {Psi} = exp(-iHT/h-bar), or simply exp(-iHT) if we express HT in units of h-bar. Behind the mathematical symbolism, it’s extremely simple physics, just being a description of the way that waves can reinforce if in phase and adding together, or cancel out if they are out of phase.
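For reference, the solution step can be written in one line, assuming a Hamiltonian H that does not itself depend on time and an amplitude normalised by its starting value:

```latex
i\hbar\,\frac{\partial \Psi}{\partial t} = H\Psi
\quad\Longrightarrow\quad
\Psi(T) = \Psi(0)\,e^{-iHT/\hbar}
```

Differentiating the right-hand expression with respect to T brings down a factor of -iH/h-bar, and multiplying by i h-bar returns H times the amplitude, as the equation requires.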

The denominator of the path integral integrand above is derived from the propagator, D(x), which Zee on page 23 describes as being: ‘the amplitude for a disturbance in the field to propagate from the origin to x.’ This amplitude for calculating a fundamental force using a path integral is constructed using Feynman’s basic rules for conservation of momentum (see page 53 of Zee’s 2003 QFT textbook).

1. Draw the Feynman diagram for the basic process, e.g. a simple tree diagram for Møller scattering of electrons via the exchange of virtual photons.

2. Label each internal line in the diagram with a momentum k and associate it with the propagator i/(k^2 – m^2 + iε). (Note that when k^2 = m^2, momentum k is “on shell” and is the momentum of a real, long-lived particle, but k can also take many values which are “off shell” and these represent “virtual particles” which are only indirectly observable from the forces they produce. Also note that iε is infinitesimal and can be dropped where k^2 – m^2 is positive, see Zee page 26.)

3. Associate each interaction vertex with the appropriate coupling constant for the type of fundamental interaction (electromagnetic, weak, gravitational, etc.) involved, and set the sum of the momentum going into that vertex equal to the sum of the momentum going out of that vertex.

4. Integrate the momentum associated with internal lines over the measure d^4k/(2π)^4.
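The rules above can be sketched for the simplest case of a single exchanged quantum. This is a toy scalar model under stated assumptions, not Zee’s full calculation: the coupling g, the function names and the example momenta are hypothetical, factors of i at the vertices are ignored, and the metric signature is taken as (+, -, -, -). For a tree diagram, momentum conservation at the vertices fixes the internal momentum, so no integral over k is left to do.

```python
def minkowski_square(k):
    """k^2 = E^2 - |p|^2 for a four-momentum k = (E, px, py, pz)."""
    e, px, py, pz = k
    return e * e - (px * px + py * py + pz * pz)

def propagator(k, m, eps=1e-9):
    """Scalar propagator i/(k^2 - m^2 + i*eps) for an internal line (rule 2)."""
    return 1j / (minkowski_square(k) - m * m + 1j * eps)

def tree_amplitude(p_in, p_out, g, m):
    """Toy single-exchange amplitude: one coupling g per vertex (rule 3),
    with the exchanged momentum q fixed by conservation at the vertex."""
    q = tuple(a - b for a, b in zip(p_in, p_out))
    return g * g * propagator(q, m)

# Elastic deflection: energy unchanged, momentum turned through 90 degrees,
# so the exchanged momentum q is spacelike (off shell, a "virtual" quantum).
p_in = (2.0, 0.0, 0.0, 1.0)
p_out = (2.0, 1.0, 0.0, 0.0)
q = tuple(a - b for a, b in zip(p_in, p_out))
amp = tree_amplitude(p_in, p_out, g=0.3, m=0.5)
print(minkowski_square(q), amp)
```

Here q^2 is negative, so it can never equal m^2: the exchanged quantum is necessarily off shell, and the small eps term plays no role in the result.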

Clearly, this kind of procedure is feasible when a few charges are considered, but is not feasible at step 1 when you have to include all the charges in the universe! The Feynman diagram would be way too complicated if trying to include 10^80 charges, which is precisely why we have used geometry to simplify the graviton exchange path integral when including all the charges in the universe.

Zee gives some interesting physical descriptions of the way that forces are mediated by the exchange of virtual photons (which seem to interact in some respects like real photons scattering off charges to impart forces, or being created at a charge, propagating to another charge, being annihilated on absorption by that charge, with a fresh gauge boson then being created and propagating back to the first charge again) on pages 20, 24 and 27:

“Somewhere in space, at some instant in time, we would like to create a particle, watch it propagate for a while, then annihilate it somewhere else in space, at some later instant in time. In other words, we want to create a source and a sink (sometimes referred to collectively as sources) at which particles can be created and annihilated.” (Page 20.)

“We thus interpret the physics contained in our simple field theory as follows: In region 1 in spacetime there exists a source that sends out a ‘disturbance in the field’, which is later absorbed by a sink in region 2 in spacetime. Experimentalists choose to call this disturbance in the field a particle of mass m.” (Page 24.)

“That the exchange of a particle can produce a force was one of the most profound conceptual advances in physics. We now associate a particle with each of the known forces: for example, the [virtual, 4-polarizations] photon with the electromagnetic force, and the graviton with the gravitational force; the former is experimentally well established [virtual photons push measurably nearby metal plates together in the Casimir effect] and the latter, while it has not yet been detected experimentally, hardly anyone doubts its existence. We … can already answer a question smart high school students often ask: Why do Newton’s gravitational force and Coulomb’s electric force both obey the 1/r^2 law?

“We see from [E = -(e^(–mr))/(4πr)] that if the mass m of the mediating particle vanishes, the force produced will obey the 1/r^2 law.” (Page 27.)
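The statement quoted from page 27 can be checked numerically. The sketch below takes the potential energy E = -(e^(–mr))/(4πr) from the quote, differentiates it numerically to get the force magnitude, and compares how the force falls off when r is doubled. The function names, the step size `h` and the sample values of m and r are illustrative assumptions.

```python
import math


def yukawa_energy(r: float, m: float) -> float:
    """Potential energy E = -exp(-m*r) / (4*pi*r) from the quoted formula."""
    return -math.exp(-m * r) / (4.0 * math.pi * r)


def force_magnitude(r: float, m: float, h: float = 1e-6) -> float:
    """Magnitude of the force, dE/dr, estimated by a central difference."""
    return (yukawa_energy(r + h, m) - yukawa_energy(r - h, m)) / (2.0 * h)


# Massless mediator: doubling r should cut the force by a factor of 4.
massless_ratio = force_magnitude(1.0, m=0.0) / force_magnitude(2.0, m=0.0)

# Massive mediator (m = 1 in arbitrary units): the force falls off faster.
massive_ratio = force_magnitude(1.0, m=1.0) / force_magnitude(2.0, m=1.0)

print("massless F(r)/F(2r):", massless_ratio)
print("massive  F(r)/F(2r):", massive_ratio)
```

With m = 0 the ratio comes out at 4, the signature of an inverse-square law; with m > 0 the exponential factor makes the ratio larger, so the force has a limited range.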

The problem with this last claim Zee makes is that mainstream spin-2 gravitons are supposed to have mass, so gravity would have a limited range, but this is a trivial point in comparison to the errors already discussed in mainstream (spin-2 graviton) approaches to quantum gravity. Zee in the next chapter, Chapter I.5 “Coulomb and Newton: Repulsion and Attraction”, gives a slightly more rigorous formulation of the mainstream quantum field theory for electromagnetic and gravitational forces, which is worth study. It makes the same basic error as the 1935 Yukawa theory, in treating the path integral of gauge bosons between only the particles upon which the forces appear to act, thus inaccurately ignoring all the other particles in the universe which are also contributing virtual particles to the interaction!

Because of the involvement of mass with the propagator, Zee uses a trick from Sidney Coleman where you work through the electromagnetic force calculation using a photon mass m and then set m = 0 at the end, to simplify the calculation (to avoid dealing with gauge invariance). Zee then points out that the electromagnetic Lagrangian density L = -(1/4)F_μν F^μν (where F_μν = 2∂_[μ A_ν] = ∂_μ A_ν – ∂_ν A_μ, A_μ(x) being the vector potential) has an overall minus sign in the Lagrangian so that action is lost when there is a variation in time! Doing the path integral with this negative Lagrangian (with a small mass added to the photon to make the field theory work) results in a positive sign for the potential energy between two lumps of similar charge, so: “The electromagnetic force between like charges is repulsive!” (Zee, page 31.)

This is quite impressive and tells us that the quantum field theory gives the right result without fiddling in this repulsion case: two similar electric charges exchange gauge bosons in a relatively simple way with one another, and this process, akin to people firing objects at one another, causes them to repel (if someone fires something at you, they are forced away from you by the recoil and you are knocked away from them when you are hit, so you are both forced apart!). Notice that such exchanged virtual photons must be stopped (or shielded) by charges in order to impart momentum and produce forces! Therefore, there must be an interaction cross-section for charges to physically absorb (or scatter back) virtual photons, and this fact offers a simple alternative formulation of the Coulomb force quantum field theory using geometry instead of path integrals!

Zee then goes on to gravitation, where the problem – from his perspective – is how to get the opposite result for two similar-sign gravitational charges than you get for similar electric charges (attraction of similar charges, not repulsion!). By ignoring the fact that the rest of the mass in the universe is of like charge to his two little lumps of energy, and so is contributing gravitons to the interaction, Zee makes the mainstream error of having to postulate a spin-2 graviton for exchange between his two masses (in a non-existent, imaginary empty universe!) just as Fierz and Pauli had suggested back in 1939.

At this point, Zee goes into fantasy physics, with a spin-2 graviton having 5 polarizations being exchanged between masses to produce an always attractive force between two masses, ignoring the rest of the mass in the universe.

It’s quite wrong of him to state on page 34 that because a spin-2 graviton Lagrangian results in universal attraction for a totally false, misleading path integral of graviton exchange between merely two masses, “we see that while like [electric] charges repel, masses [gravitational charges] attract.” This is wrong because even neglecting the error I’ve pointed out of ignoring gravitational charges (masses) all around us in the universe, Zee has got himself into a catch 22 or circular argument: he first assumes the spin-2 graviton to start off with, then claims that because it would cause attraction in his totally unreal (empty apart from two test masses) universe, he has explained why masses attract. However, the only reason why he assumes a spin-2 graviton to start off with is because that gives the desired properties in the false calculation! It isn’t an explanation. If you assume something (without any physical evidence, such as observation of spin-2 gravitons) just because you already know it does something in a particular calculation, you haven’t explained anything by then giving that calculation which merely is the basis for the assumption you are making! (By analogy, you can’t pull yourself up in the air by tugging at your bootstraps.)

This perversion of physical understanding gets worse. On page 35, Zee states:

“It is difficult to overstate the importance (not to speak of the beauty) of what we have learned: The exchange of a spin 0 particle produces an attractive force, of a spin 1 particle produces a repulsive force, and of a spin 2 particle an attractive force, realized in the hadronic strong interaction, the electromagnetic interaction, and the gravitational interaction, respectively.”

Notice the creepy way that the spin-2 graviton – completely unobserved in nature – is steadily promoted in stature as Zee goes through the book, ending up the foundation stone of mainstream physics, string theory:

‘String theory has the remarkable property of predicting [spin-2] gravity.’ – Professor Edward Witten (M-theory originator), ‘Reflections on the Fate of Spacetime’, Physics Today, April 1996.

“For the last eighteen years particle theory has been dominated by a single approach to the unification of the Standard Model interactions and quantum gravity. This line of thought has hardened into a new orthodoxy … It is a striking fact that there is absolutely no evidence whatsoever for this complex and unattractive conjectural theory. There is not even a serious proposal for what the dynamics of the fundamental ‘M-theory’ is supposed to be or any reason at all to believe that its dynamics would produce a vacuum state with the desired properties. The sole argument generally given to justify this picture of the world is that perturbative string theories have a massless spin two mode and thus could provide an explanation of gravity, if one ever managed to find an underlying theory for which perturbative string theory is the perturbative expansion.” [Emphasis added.]

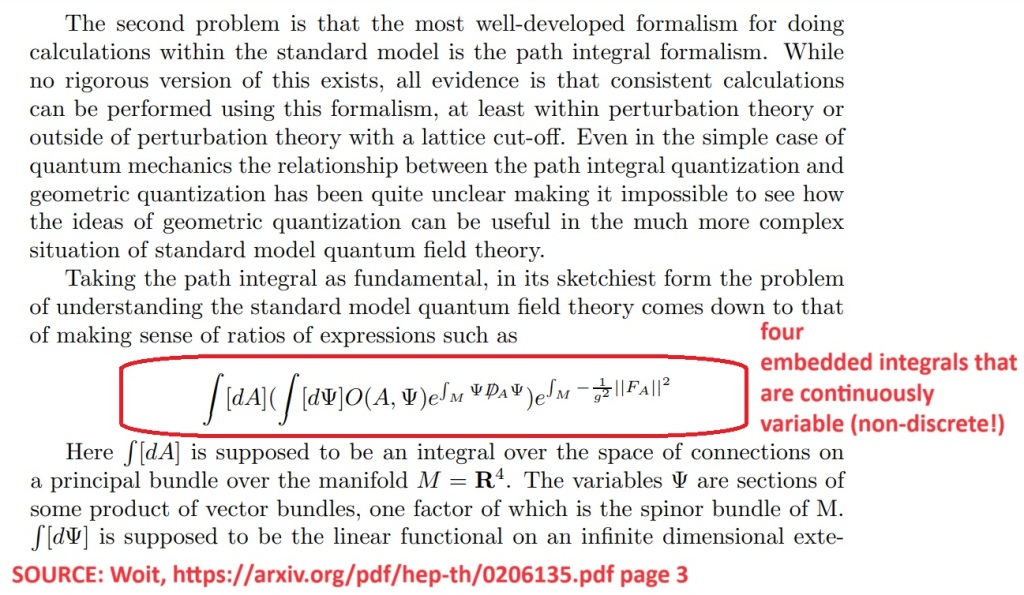

– Dr Peter Woit, http://arxiv.org/abs/hep-th/0206135, page 52.

Fig. 1: this illustration makes clear the obfuscation of path integrals given by the mainstream (left side illustration showing curved path histories from location A to location B, as shown in Wikipedia’s ‘Path integral formulation’ article) and the true summing of path histories (right side illustration, based on Richard P. Feynman’s 1985 book, QED, showing that there are two things being summed over physically: firstly all the geometric interaction graphs for a single particular type of Feynman interaction diagram to be implemented physically over all geometric possibilities, weighted for interferences, and secondly, a wide range of different Feynman diagrams: this is why the path integral contains an integral for determining the least action such as the minimum force or the least time, as well as being an integral over all kinds of Feynman diagrams).

In classical theories like general relativity, there are no quantum (discrete) interactions being modelled, so the effect of lots of discrete deflections of a photon by gravitons must be represented by a curved line. This is not the true picture. Once you get rid of classical theories altogether, all curvatures are composed of a lot of little deflections due to discrete (quantized) interactions. In between interactions, Newton’s 1st law of motion determines the path of the particle (be it a real particle or a virtual one): it goes in a straight line. There is no curvature. Like bad textbooks, the Wikipedia article falsely claims:

“In calculating the amplitude for a single particle to go from one place to another in a given time, it would be correct to include histories in which the particle describes elaborate curlicues, histories in which the particle shoots off into outer space and flies back again, and so forth.” [Emphasis added.]

This false belief is also given in many books about path integrals. It is false because, in a true quantum field theory, the whole point is that all accelerations are due to the summation of many little field quanta interactions (e.g. graviton exchange interactions), not a curved spacetime, which is just an approximation for large numbers of interactions! It’s incorrect to include curvature in a quantum field theory, except where the curvature is really a lot of little discrete steps (straight lines joined by vertices where interactions with gravitons or whatever occur). In a quantum field theory, all accelerating motion is composed of lots of discrete interactions such as gauge bosons exchanged (scattered between) charges, as depicted by the following Feynman diagrams:

Fig. 2: Feynman diagrams for classical general relativity and for two different graviton spins.

Similarly, the vast number of impacts of air molecules on the sail when windsurfing gives what approximates to a continuous, smooth force; but that smoothness is only due to the large number of discrete impacts (reduce the size of the sail to 5 microns across, and it will move in a completely non-classical, chaotic way due to successive random individual impacts of air molecules, a process called Brownian motion).

One way that some paths could appear to be chaotic and not straight lines is if loops appear along them (bottom of right hand side of Fig. 1 illustration above). Loops are the process of bosonic radiation transforming into pairs of virtual fermions of opposite charge which exist for a brief period and cause effects (like becoming polarized in the field which creates them, and therefore shielding that field slightly by absorbing energy from it, and causing the deflection of moving particles which encounter them) before annihilating back into bosonic radiation. However, this doesn’t cause curvature, it just causes zig-zag deflections in path trajectories, with each vertex corresponding to an interaction with virtual fermions. This doesn’t occur in any case in weak fields, because Schwinger proved that in a steady electric field you need a field strength exceeding 1.3*10^18 volts/metre in order to get pair-production and annihilation (loops). Below that immense field strength, there are no loops.
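The field strength quoted above can be reproduced from the standard expression for the Schwinger critical field, E_c = m^2 c^3/(e ħ), where m is the electron mass. That formula is not written out in the text, so treat the sketch below as an assumption about where the 1.3*10^18 volts/metre figure comes from; the constants are rounded SI values.

```python
# Rounded SI values of the physical constants.
ELECTRON_MASS = 9.1093837e-31      # kg
SPEED_OF_LIGHT = 2.99792458e8      # m/s
ELEMENTARY_CHARGE = 1.602176634e-19  # C
HBAR = 1.054571817e-34             # J s


def schwinger_critical_field() -> float:
    """Critical field E_c = m^2 c^3 / (e * hbar), in volts per metre."""
    return (ELECTRON_MASS**2 * SPEED_OF_LIGHT**3) / (ELEMENTARY_CHARGE * HBAR)


critical_field = schwinger_critical_field()
print(f"Schwinger critical field: {critical_field:.3e} V/m")
```

The result is of order 10^18 V/m, consistent with the threshold quoted in the paragraph above.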

For this reason, when you apply path integrals for most purposes like low-energy physics (understanding the quantum field theory for gravity and electromagnetism in everyday situations, for example), the paths are always straight lines between quantum interactions due to Newton’s 1st law of motion, as Feynman shows in his book QED. In low-energy physics, you’re summing a lot of simple straight line interaction histories, with the only complexity being that the vertex for the interaction can occur in various possible places in spatial geometry.

E.g., in the Fig. 1 diagram above, the light ray gets deflected – by a quantum interaction – at the water surface, and because the water surface extends over a region of space, this means that you have to take account of the light ray interacting at any location on that water surface. Weighting each interaction path according to the principle of least action (least time in this situation) and integrating them tells you the effective path taken, which is the path that takes the least time to complete. Generally nature is thrifty: this is the principle of least action. When light travels through a path containing air and water (it travels slower through water than air), it appears to take the quickest route possible. This gives the refraction of light by water. Feynman’s whole analysis was inspired by the simple question: how does the photon know in advance of reaching the water surface, the best direction to go in order to minimise the time taken? It doesn’t, as Feynman explains: it tries to take all routes possible (all straight lines, not wavy curves, because Newton’s 1st law of motion holds in between quantum interactions!), but many of those routes cancel out as a result of interferences between the wave phases for those nearby virtual photons.
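The refraction argument above can be sketched in a few lines of Python. Each path is two straight legs joined at a point x on the water surface; the code finds the crossing point of least time, checks that it satisfies Snell's law, and then adds up the phase "arrows" for a band of paths near the least-time point and for an equally wide band far from it. The geometry, the refractive index 1.33, the angular frequency `omega` and all function names are illustrative assumptions, with the speed of light in air set to 1.

```python
import cmath
import math

N_WATER = 1.33                       # refractive index of water (assumed)
H_AIR, H_WATER, D = 1.0, 1.0, 1.0    # hypothetical source height, detector depth, offset


def travel_time(x: float) -> float:
    """Time for the straight-line path source -> (x, 0) -> detector."""
    air_leg = math.hypot(x, H_AIR)
    water_leg = math.hypot(D - x, H_WATER)
    return air_leg + N_WATER * water_leg   # light is slower in water by N_WATER


def phasor_sum(x_start: float, x_stop: float, omega: float = 200.0, dx: float = 0.001) -> float:
    """Magnitude of the summed arrows exp(i*omega*t) for crossing points in a band."""
    steps = int(round((x_stop - x_start) / dx))
    total = sum(cmath.exp(1j * omega * travel_time(x_start + i * dx)) for i in range(steps))
    return abs(total)


# Scan crossing points between source and detector for the least-time path.
candidates = [i * 0.0001 for i in range(10001)]
x_best = min(candidates, key=travel_time)

# Snell's law: sin(angle in air) / sin(angle in water) should equal N_WATER.
sin_air = x_best / math.hypot(x_best, H_AIR)
sin_water = (D - x_best) / math.hypot(D - x_best, H_WATER)
snell_ratio = sin_air / sin_water

# Arrows near the least-time path line up; arrows far from it largely cancel.
near = phasor_sum(x_best - 0.2, x_best + 0.2)
far = phasor_sum(3.0, 3.4)

print("least-time crossing point:", x_best)
print("sin ratio (expect about 1.33):", snell_ratio)
print("summed arrows near / far:", near, far)
```

Both bands contain the same number of straight-line paths, yet the band around the least-time crossing point contributes far more to the total, which is the cancellation described in the paragraph above.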

This is similar to what happens if you have two radio transmitter antennas and feed a signal into one and an inverted version of that signal into the other! The usual situation of a power transmission line with two conductors carrying alternating current is of this sort. The two radio waves superimpose to perfectly cancel each other out as seen from large distances, but when you are close to either antenna (within a range of up to a few times the distance between the antennas) the phases of the radio waves don’t perfectly interfere, and so very important effects – physically causing what is described by Maxwell’s equation for ethereal “displacement current” in a capacitor with vacuum as the dielectric, while it charges up or discharges – do actually occur. Because each of the two conductors in your mains power flex carries an inverted signal of that in the other, there is cancellation of the radiated 50 Hertz signal as seen from long distances. At short distances you can detect the 50 Hertz signal, because you’re near enough that the interference is not perfect (i.e., one conductor is significantly closer to you than the other). Also, if the power flex is near something conductive, the conductor reflects radio waves back that may prevent perfect interference, so some 50 Hz radio waves escape, causing a slight electric power loss. But generally, alternating current doesn’t result in power cords acting as 50 Hz antennas. This is because of phase vector cancellations, similar in general principle to the cancellation of paths far from that of least action in the path integral of quantum field theory.
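The near and far behaviour described above can be illustrated with a deliberately crude model: two point sources a few millimetres apart, driven in antiphase at 50 Hz, each treated as a scalar spherical wave exp(ikr)/r. This ignores the real vector structure of the fields around a power flex, and the conductor spacing, observer distances and function name are all assumptions picked for illustration.

```python
import cmath
import math

SPEED_OF_LIGHT = 2.99792458e8                    # m/s
FREQUENCY = 50.0                                 # Hz, mains frequency
WAVENUMBER = 2.0 * math.pi * FREQUENCY / SPEED_OF_LIGHT
SEPARATION = 0.005                               # m, hypothetical conductor spacing


def residual_fraction(distance: float) -> float:
    """Combined amplitude of two antiphase sources, relative to one source alone.

    The observer sits on the line through both sources, so one source is
    closer than the other by SEPARATION.
    """
    r_near = distance - SEPARATION / 2.0
    r_far = distance + SEPARATION / 2.0
    wave_a = cmath.exp(1j * WAVENUMBER * r_near) / r_near
    wave_b = -cmath.exp(1j * WAVENUMBER * r_far) / r_far   # inverted signal
    single = 1.0 / distance
    return abs(wave_a + wave_b) / single


close_up = residual_fraction(0.01)     # 1 cm from the flex
far_away = residual_fraction(100.0)    # 100 m from the flex

print("residual fraction at 1 cm: ", close_up)
print("residual fraction at 100 m:", far_away)
```

In this model the uncancelled fraction is roughly the conductor spacing divided by the distance, so it is large within a few spacings of the flex and negligible far away.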

Feynman states in a footnote printed on pages 55-6 of his book QED (Penguin, London, 1990):